CSOUND

INTRODUCTION

PLEASE READ THE NEW VERSION OF THE CSOUND FLOSS MANUAL HERE:

https://flossmanual.csound.com/

THIS VERSION HAS REVISED TEXT AND ALL EXAMPLES CAN BE EXECUTED IN THE BROWSER!

Csound is one of the best known and longest established programs in the field of audio programming. It was developed in the mid-1980s at the Massachusetts Institute of Technology (MIT) by Barry Vercoe, but Csound's history lies even deeper within the roots of computer music: it is a direct descendant of the oldest computer program for sound synthesis, 'MusicN', by Max Mathews. Csound is free and open source, distributed under the LGPL licence, and it is maintained and expanded by a core of developers with support from a wider global community.

Csound has been growing for 30 years. There is rarely anything related to audio that you cannot do with Csound. You can work by rendering offline, or in real-time by processing live audio and synthesizing sound on the fly. You can control Csound via MIDI, OSC, through a network, within a browser or via the Csound API (Application Programming Interface). Csound will run on all major platforms, on phones, tablets and tinyware computers. In Csound you will find the widest collection of tools for sound synthesis and sound modification, arguably offering a superset of features offered by similar software and with an unrivaled audio precision.

Csound is simultaneously both 'old school' and 'new school'.

Is Csound difficult to learn? Generally speaking, graphical audio programming languages like Pure Data,[1] Max or Reaktor are easier to learn than text-coded audio programming languages such as Csound or SuperCollider. In Pd, Max or Reaktor you cannot make a typo which produces an error that you do not understand. You program without being aware that you are programming. The user experience mirrors that of patching together various devices in a studio. This is a fantastically intuitive approach but when you deal with more complex projects, a text-based programming language is often easier to use and debug, and many people prefer to program by typing words and sentences rather than by wiring symbols together using the mouse.

Yet Csound can straddle both approaches: it is also very easy to use Csound as an audio engine inside Pd or Max. Have a look at the chapter Csound in Other Applications for further information.

Amongst text-based audio programming languages, Csound is arguably the simplest. You do not need to know any specific programming techniques or to be a computer scientist. The basics of the Csound language are a straightforward transfer of the signal flow paradigm to text.

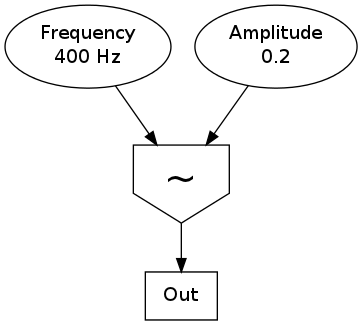

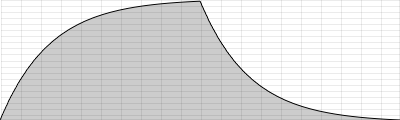

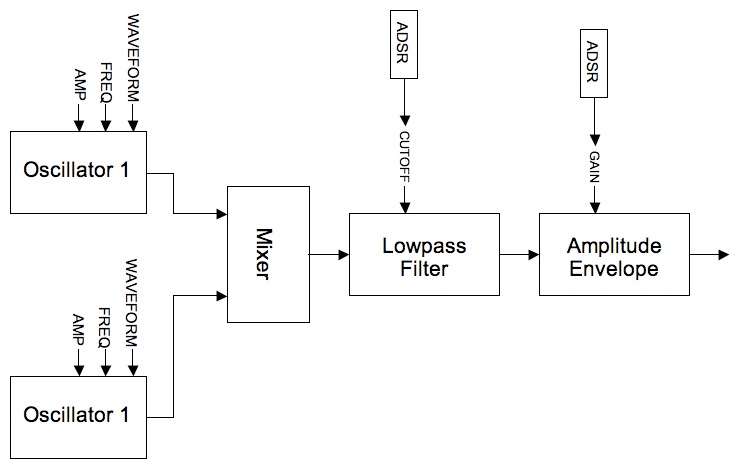

For example, to create a 400 Hz sine oscillator with an amplitude of 0.2, this is the signal flow:

Here is a possible transformation of the signal graph into Csound code:

instr Sine

aSig poscil 0.2, 400

out aSig

endin

The oscillator is represented by the opcode poscil and receives its input arguments on the right-hand side. These are amplitude (0.2) and frequency (400). It produces an audio signal called aSig at the left side which is in turn the input of the second opcode out. The first and last lines encase these connections inside an instrument called Sine.

With the release of Csound version 6, it is possible to write the same code in an even more condensed fashion using so-called "functional syntax", as shown below:[2]

instr Sine

out poscil(0.2, 400)

endin
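Neither of the snippets above will run on its own: an instrument needs to sit inside a .csd file together with a header and a score. The following is a minimal sketch of such a file. The header values (sr, ksmps, nchnls, 0dbfs), the -odac option and the two-second note are assumptions chosen for illustration and can be changed freely.

```csound
<CsoundSynthesizer>
<CsOptions>
-odac
</CsOptions>
<CsInstruments>
; header values are assumptions chosen for this sketch
sr = 44100
ksmps = 32
nchnls = 1
0dbfs = 1

instr Sine
  ; 400 Hz sine oscillator with an amplitude of 0.2
  aSig poscil 0.2, 400
  out aSig
endin
</CsInstruments>
<CsScore>
; start the named instrument at time 0 and let it play for 2 seconds
i "Sine" 0 2
</CsScore>
</CsoundSynthesizer>
```

The score line refers to the instrument by its name in quotes; a numbered instrument would be called by its number instead.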

It is often difficult to find up-to-date resources that show and explain what is possible with Csound. Documentation and tutorials produced by developers and experienced users tend to be scattered across many different locations. This issue was one of the main motivations for producing this manual: to facilitate a flow of knowledge between contemporary Csound users and those wishing to learn more about Csound.

More than 15 years after the milestone of Richard Boulanger's Csound Book, the Csound FLOSS Manual is intended to offer an easy-to-understand introduction and to provide a centre of up-to-date information about the many features of Csound. It is not as detailed or as in-depth as the Csound Book, but it includes new information and shares this knowledge with the wider Csound community.

Throughout this manual we will attempt to maintain a balance between providing users with knowledge of most of the important aspects of Csound and remaining concise and simple enough to avoid overwhelming the reader through the sheer number of possibilities offered by Csound. Frequently this manual will link to other more detailed resources such as the Canonical Csound Reference Manual, the main support documentation provided by the Csound developers and associated community over the years, and the Csound Journal (edited by James Hearon and Iain McCurdy), a roughly quarterly online publication with many great Csound-related articles.

We hope you enjoy reading this textbook and wish you happy Csounding!

[1] More commonly known as Pd; see the Pure Data FLOSS Manual for further information.

[2] See chapter 03I about Functional Syntax.

HOW TO USE THIS MANUAL

The goal of this manual is to provide a readable introduction to Csound. In no way is it meant as a replacement for the Canonical Csound Reference Manual. It is intended as an introduction-tutorial-reference hybrid, gathering together the most important information you will need to work with Csound in a variety of situations. In many places links are provided to other resources such as The Canonical Csound Reference Manual, the Csound Journal, example collections and more.

It is not necessary to read each chapter in sequence; feel free to jump to any chapter that interests you, although bear in mind that occasionally a chapter may make reference to a previous one.

If you are new to Csound, the QUICK START chapter will be the best place to go to help you get started. BASICS provides a general introduction to key concepts about digital sound, vital to understanding how Csound deals with audio. The CSOUND LANGUAGE chapter provides greater detail about how Csound works and how to work with Csound.

SOUND SYNTHESIS introduces various methods of creating sound from scratch and SOUND MODIFICATION describes various methods of transforming sounds that already exist. SAMPLES outlines various ways you can record and play back audio samples in Csound, an area that might be of particular interest to those intent on using Csound as a real-time performance instrument. The MIDI and OPEN SOUND CONTROL chapters focus on different methods of controlling Csound using external software or hardware. The final chapters introduce various front-ends that can be used to interface with the Csound engine and Csound's communication with other applications.

If you would like to know more about a topic, and in particular about the use of any opcode, please refer first to the Canonical Csound Reference Manual.

All files - examples and audio files - can be downloaded at www.csound-tutorial.net. If you use CsoundQt, you can find all the examples in CsoundQt's examples menu under "Floss Manual Examples". When learning Csound (or any other programming language), you may find it beneficial to type the examples out by hand as it will help you to memorise Csound's syntax as well as how to use its opcodes. The more familiar you become with typing out Csound code, the more proficient you will become at implementing your own ideas from low level principles; your focus will shift from the code itself to the musical idea behind the code.

Like other audio tools, Csound can produce an extreme dynamic range (before considering Csound's ability to implement compression and limiting). Be careful when you run the examples! Set the volume on your amplifier low to start with and take special care when using headphones.

You can help to improve this manual either by reporting bugs or by sending requests for new topics or by joining as a writer. Just contact one of the maintainers (see ON THIS RELEASE).

Some issues of this textbook can be ordered as a print-on-demand hard copy at www.lulu.com. Just use Lulu's search utility and look for "Csound".

ON THIS (6th) RELEASE

A year on from the 5th release, this release adds some exciting new sections as well as a number of chapter augmentations and necessary updates. Notable are Michael Gogins' chapter on running Csound within a browser using HTML5 technology, Victor Lazzarini's and Ed Costello's explanations about Web based Csound, and a new chapter describing the pairing of Csound with the Haskell programming language.

Thanks to all contributors to this release.

What's new in this Release

- Added a section about the necessity of explicit initialization of k-variables for multiple calls of an instrument or UDO in chapter 03A Initialization and Performance Pass (examples 8-10).

- Added a section about the while/until loop in chapter 03C Control Structures.

- Expanded chapter 03D Function Tables, adding descriptions of GEN 08, 16, 19 and 30.

- Small additions in chapter 03E Arrays.

- Some additions and a new section to help using the different opcodes (schedule, event, scoreline etc) in 03F Live Events.

- Added a chapter 03I about Functional Syntax.

- Added examples and descriptions for the powershape and distort opcodes in the chapter 04 Sound Synthesis: Waveshaping.

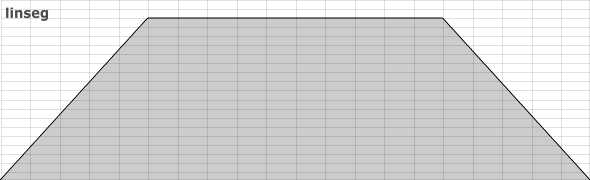

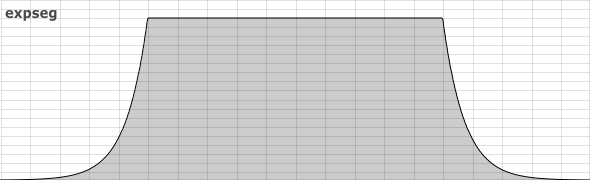

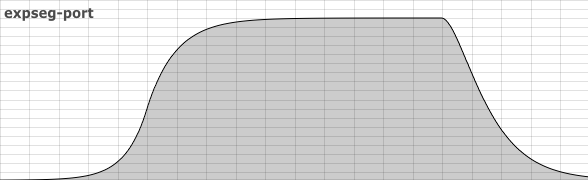

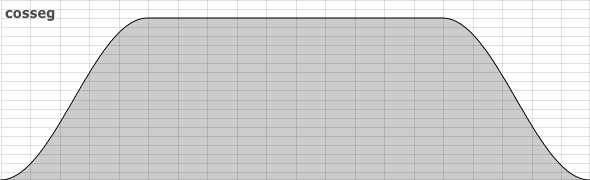

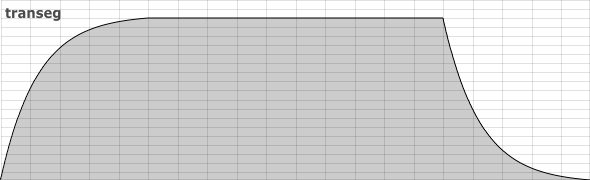

- Expanded chapter 05A Envelopes, principally to incorporate descriptions of transeg and cosseg.

- Added chapter 05L about methods of amplitude and pitch tracking in Csound.

- Added example to illustrate the recording of controller data to the chapter 07C Working with Controllers at the request of Menno Knevel.

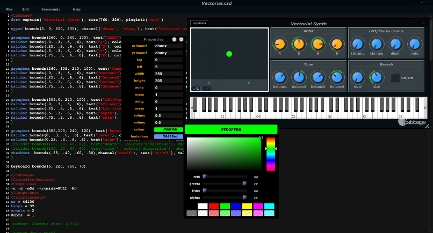

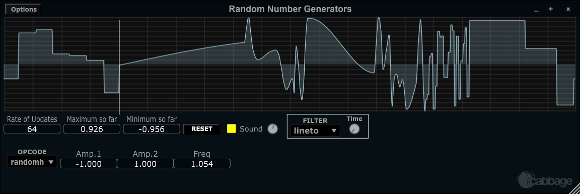

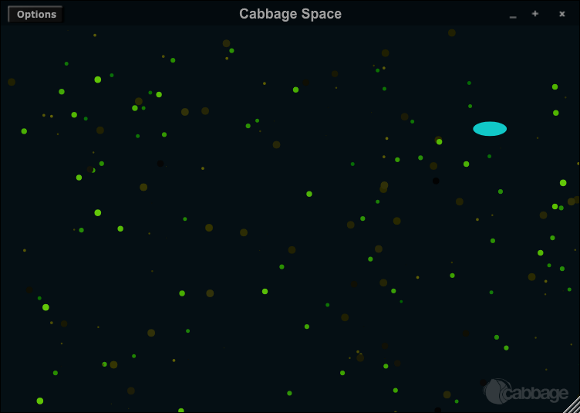

- Chapter 10B Cabbage has been updated and attention drawn to some of its newest features.

- Chapter 10F Web Based Csound now has a description of how to use Csound via UDP and of pNaCl Csound (written by Victor Lazzarini). The section about Csound as a Javascript Library (using Emscripten) in the same chapter has been updated by Ed Costello.

- Refactored chapter 12A about The Csound API for Csound6 and added a section about the use of Foreign Function Interfaces (FFI) (written by François Pinot).

- Added chapter 12G about Csound and Haskell (written by Anton Kholomiov).

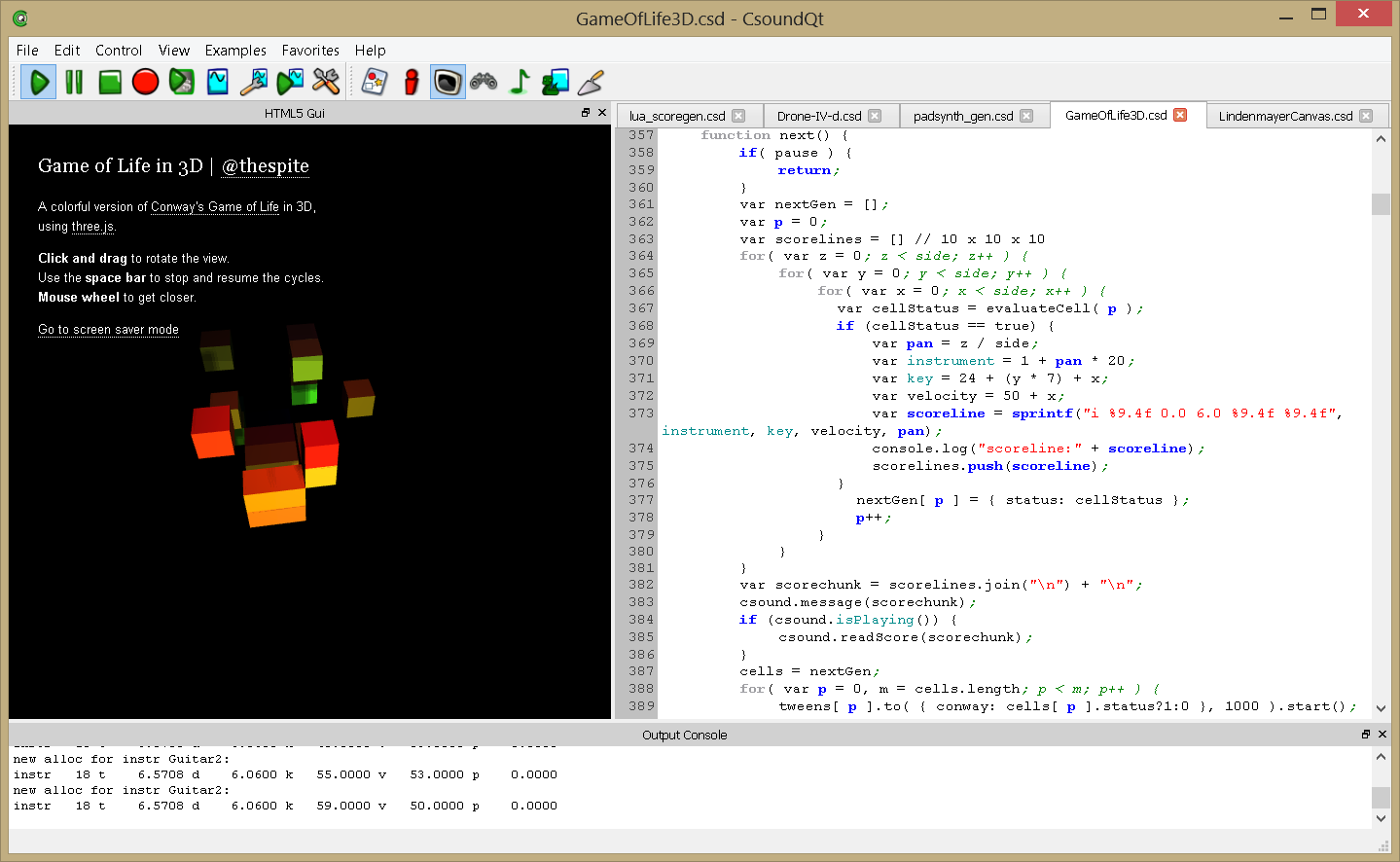

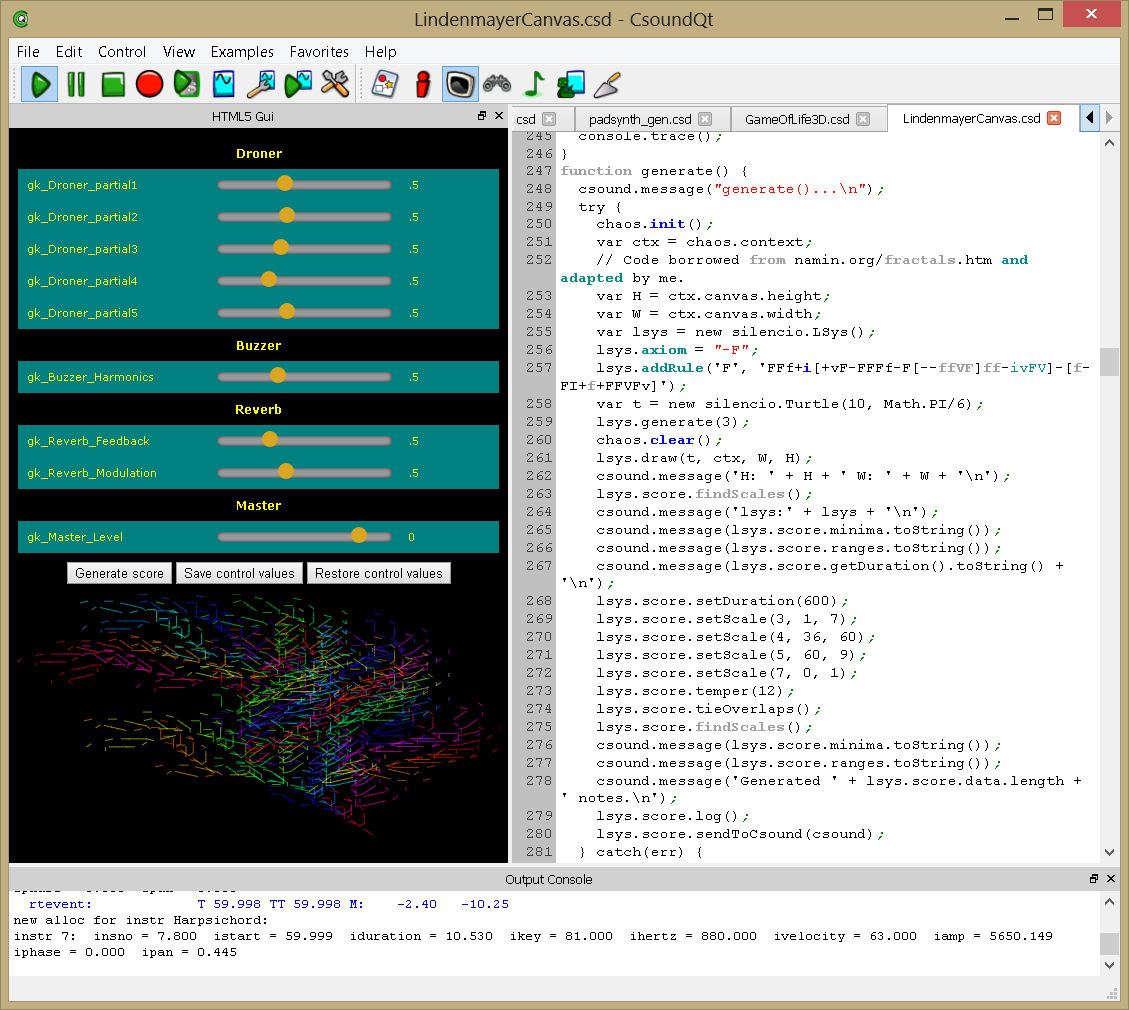

- Added chapter 12H about Csound and HTML, also explaining the usage of HTML5 Widgets (written by Michael Gogins).

The examples in this book are included in CsoundQt (Examples > FLOSS Manual Examples). Even the examples which require external files should now work out of the box.

If you would like to refer to previous releases, you can find them at http://files.csound-tutorial.net/floss_manual. All the current csd files and audio samples are available there as well.

Berlin, March 2015

Iain McCurdy and Joachim Heintz

License

All chapters are copyright of the authors (see below). Unless otherwise stated, all chapters in this manual are licensed under the GNU General Public License version 2.

This documentation is free documentation; you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation; either version 2 of the License, or (at your option) any later version.

This documentation is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You should have received a copy of the GNU General Public License along with this documentation; if not, write to the Free Software Foundation, Inc., 51 Franklin Street, Fifth Floor, Boston, MA 02110-1301, USA.

Authors

Note that this book is a collective effort, so some of the contributors may not have been quoted correctly. If you are one of them, please contact us, or simply add your name for credit in the appropriate place.

INTRODUCTION

PREFACE

Joachim Heintz, Andres Cabrera, Alex Hofmann, Iain McCurdy, Alexandre Abrioux

HOW TO USE THIS MANUAL

Joachim Heintz, Andres Cabrera, Iain McCurdy, Alexandre Abrioux

01 BASICS

A. DIGITAL AUDIO

Alex Hofmann, Rory Walsh, Iain McCurdy, Joachim Heintz

B. PITCH AND FREQUENCY

Rory Walsh, Iain McCurdy, Joachim Heintz

C. INTENSITIES

Joachim Heintz

D. RANDOM

Joachim Heintz, Martin Neukom, Iain McCurdy

02 QUICK START

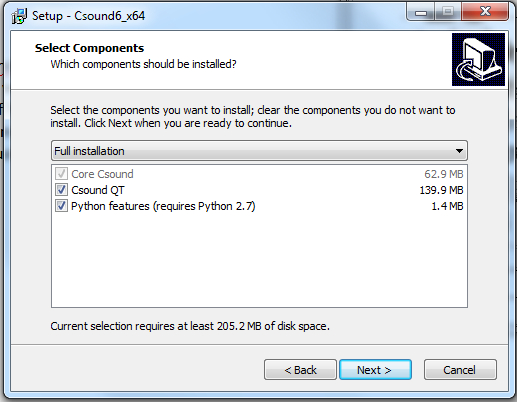

A. MAKE CSOUND RUN

Alex Hofmann, Joachim Heintz, Andres Cabrera, Iain McCurdy, Jim Aikin, Jacques Laplat, Alexandre Abrioux, Menno Knevel

B. CSOUND SYNTAX

Alex Hofmann, Joachim Heintz, Andres Cabrera, Iain McCurdy

C. CONFIGURING MIDI

Andres Cabrera, Joachim Heintz, Iain McCurdy

D. LIVE AUDIO

Alex Hofmann, Andres Cabrera, Iain McCurdy, Joachim Heintz

E. RENDERING TO FILE

Joachim Heintz, Alex Hofmann, Andres Cabrera, Iain McCurdy

03 CSOUND LANGUAGE

A. INITIALIZATION AND PERFORMANCE PASS

Joachim Heintz

B. LOCAL AND GLOBAL VARIABLES

Joachim Heintz, Andres Cabrera, Iain McCurdy

C. CONTROL STRUCTURES

Joachim Heintz

D. FUNCTION TABLES

Joachim Heintz, Iain McCurdy

E. ARRAYS

Joachim Heintz

F. LIVE CSOUND

Joachim Heintz, Iain McCurdy

G. USER DEFINED OPCODES

Joachim Heintz

H. MACROS

Iain McCurdy

I. FUNCTIONAL SYNTAX

Joachim Heintz

04 SOUND SYNTHESIS

A. ADDITIVE SYNTHESIS

Andres Cabrera, Joachim Heintz, Bjorn Houdorf

B. SUBTRACTIVE SYNTHESIS

Iain McCurdy

C. AMPLITUDE AND RINGMODULATION

Alex Hofmann

D. FREQUENCY MODULATION

Alex Hofmann, Bjorn Houdorf

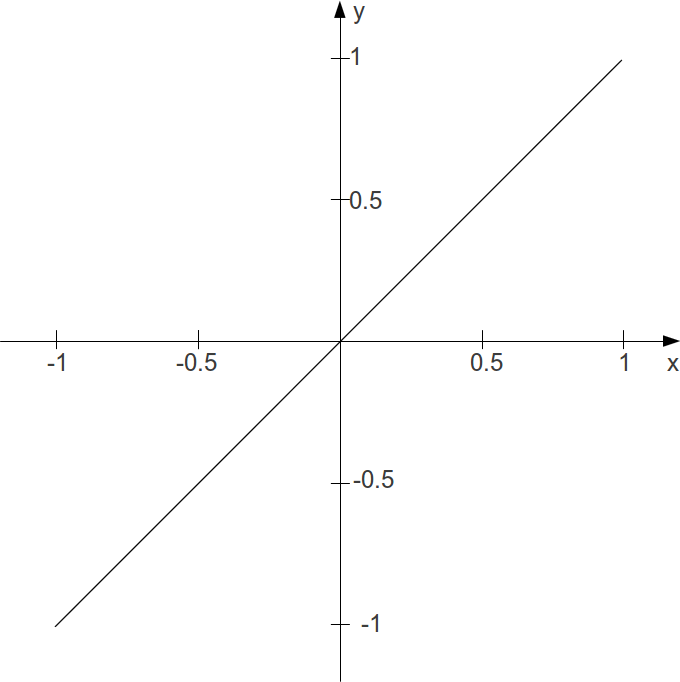

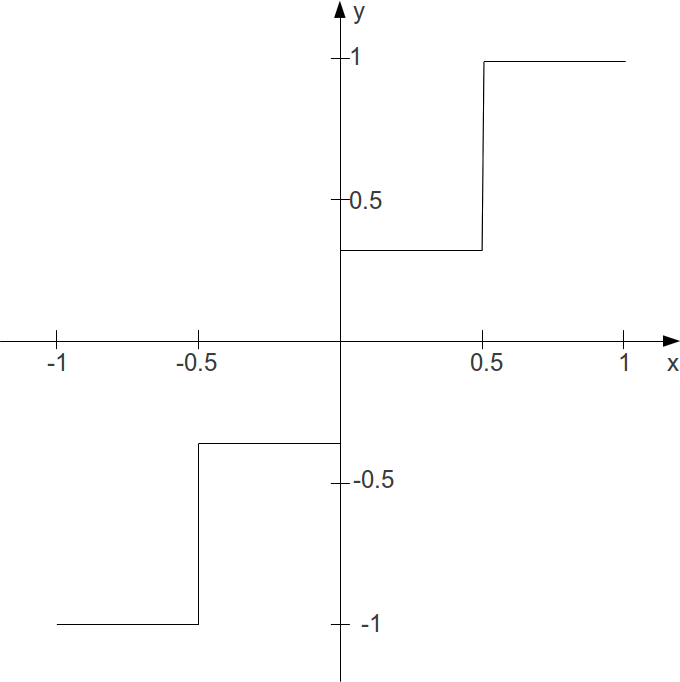

E. WAVESHAPING

Joachim Heintz, Iain McCurdy

F. GRANULAR SYNTHESIS

Iain McCurdy

G. PHYSICAL MODELLING

Joachim Heintz, Iain McCurdy, Martin Neukom

H. SCANNED SYNTHESIS

Christopher Saunders

05 SOUND MODIFICATION

A. ENVELOPES

Iain McCurdy

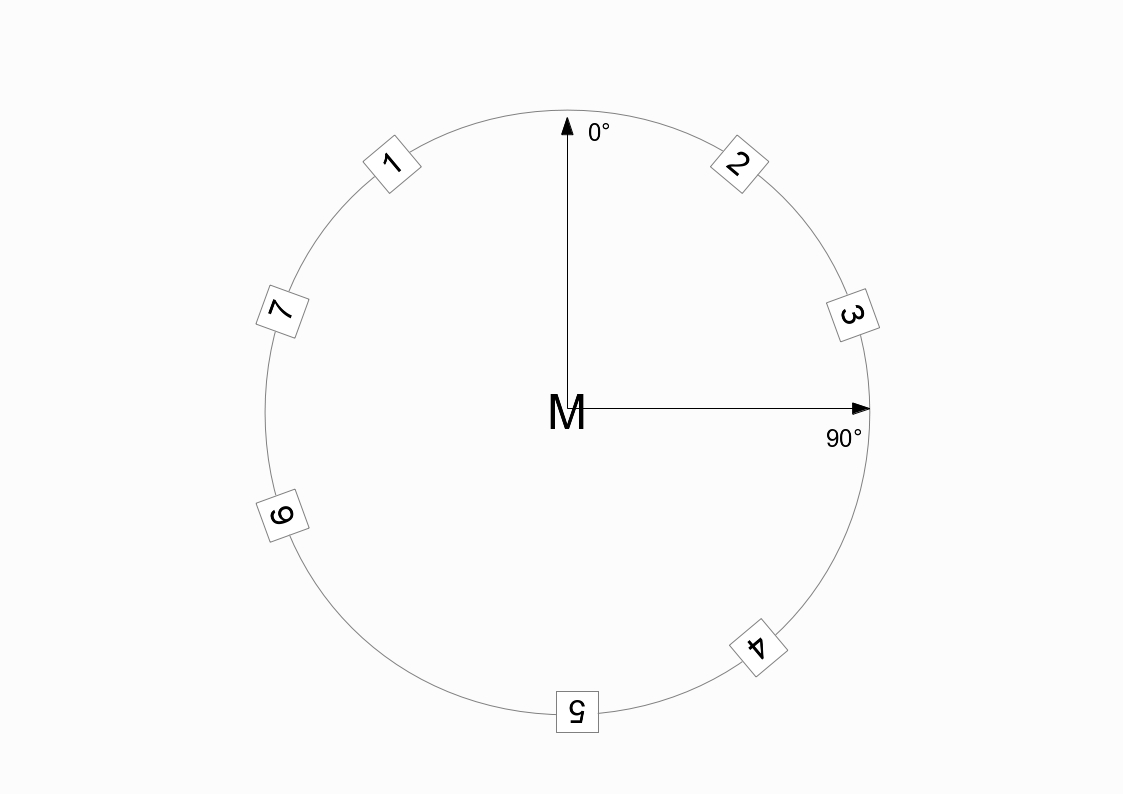

B. PANNING AND SPATIALIZATION

Iain McCurdy, Joachim Heintz

C. FILTERS

Iain McCurdy

D. DELAY AND FEEDBACK

Iain McCurdy

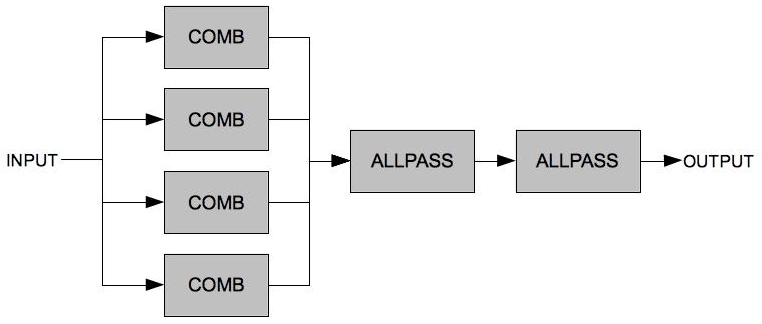

E. REVERBERATION

Iain McCurdy

F. AM / RM / WAVESHAPING

Alex Hofmann, Joachim Heintz

G. GRANULAR SYNTHESIS

Iain McCurdy, Oeyvind Brandtsegg, Bjorn Houdorf

H. CONVOLUTION

Iain McCurdy

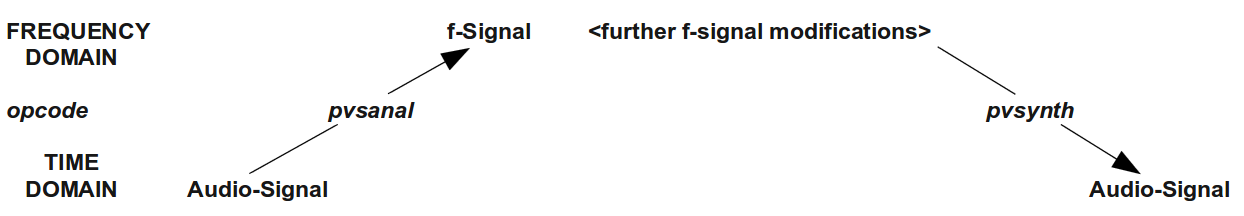

I. FOURIER ANALYSIS / SPECTRAL PROCESSING

Joachim Heintz

K. ANALYSIS TRANSFORMATION SYNTHESIS

Oscar Pablo di Liscia

L. AMPLITUDE AND PITCH TRACKING

Iain McCurdy

06 SAMPLES

A. RECORD AND PLAY SOUNDFILES

Iain McCurdy, Joachim Heintz

B. RECORD AND PLAY BUFFERS

Iain McCurdy, Joachim Heintz, Andres Cabrera

07 MIDI

A. RECEIVING EVENTS BY MIDIIN

Iain McCurdy

B. TRIGGERING INSTRUMENT INSTANCES

Joachim Heintz, Iain McCurdy

C. WORKING WITH CONTROLLERS

Iain McCurdy

D. READING MIDI FILES

Iain McCurdy

E. MIDI OUTPUT

Iain McCurdy

08 OTHER COMMUNICATION

A. OPEN SOUND CONTROL

Alex Hofmann

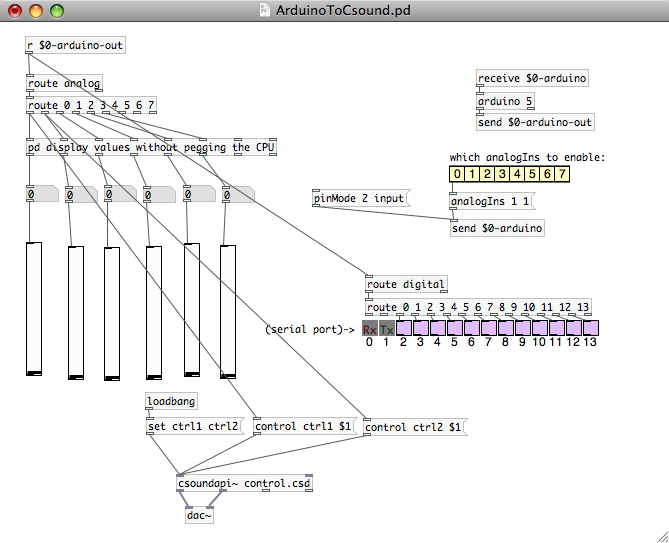

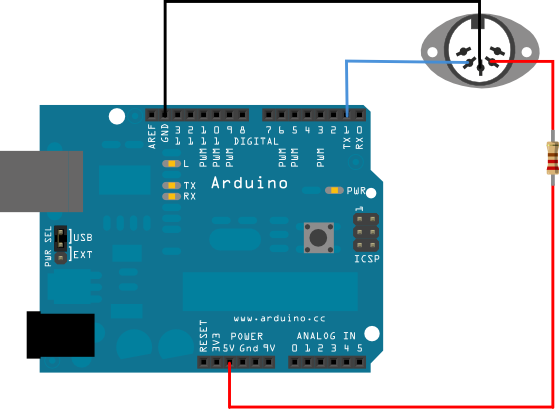

B. CSOUND AND ARDUINO

Iain McCurdy

09 CSOUND IN OTHER APPLICATIONS

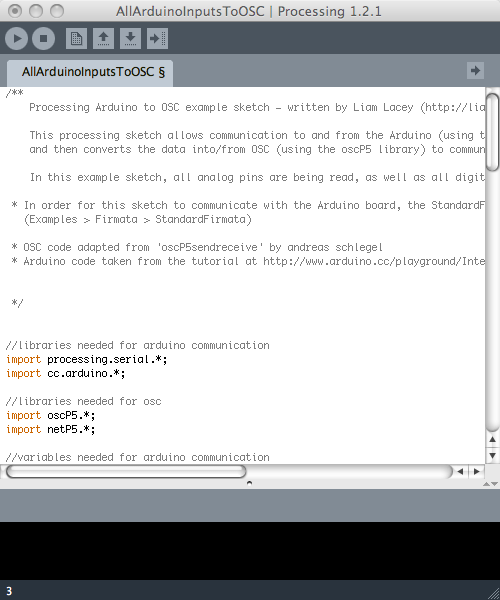

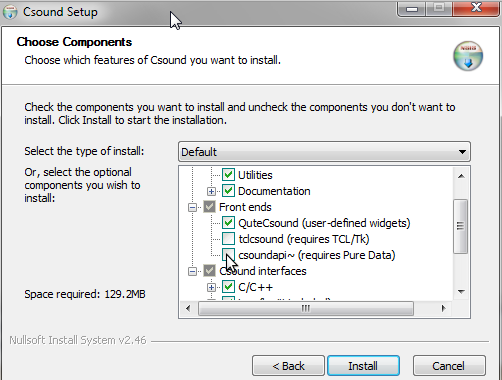

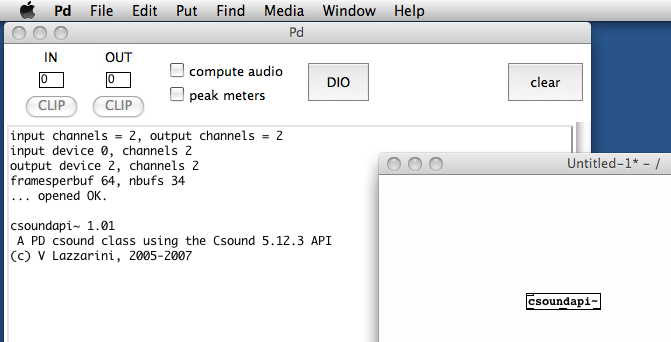

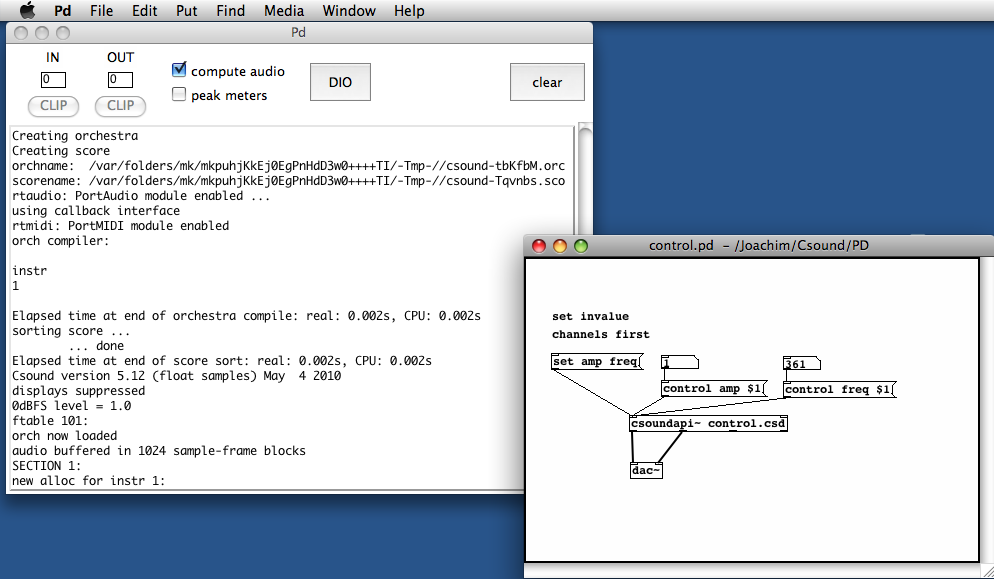

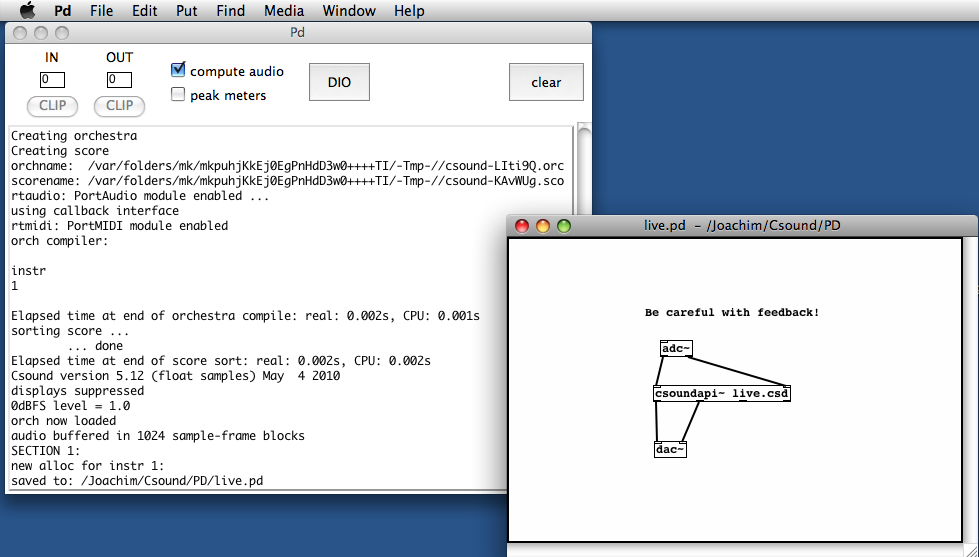

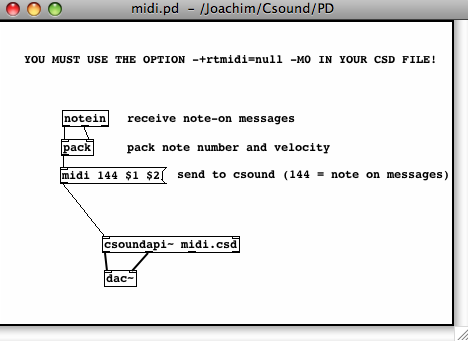

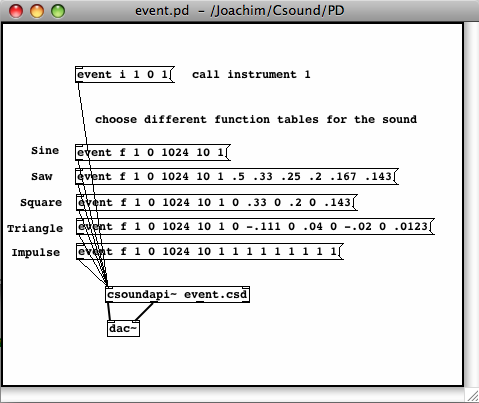

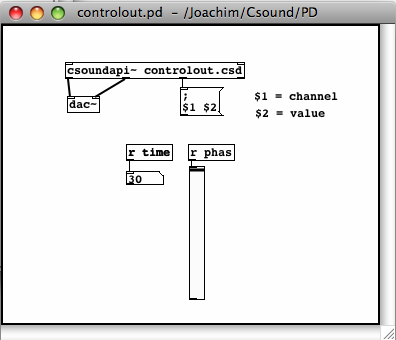

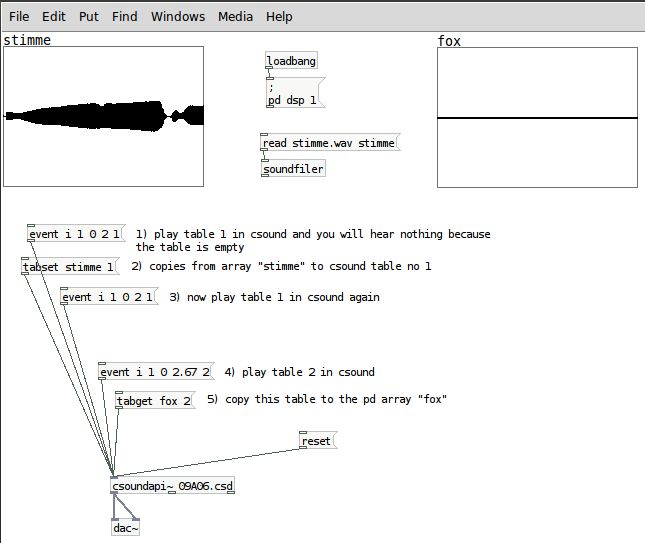

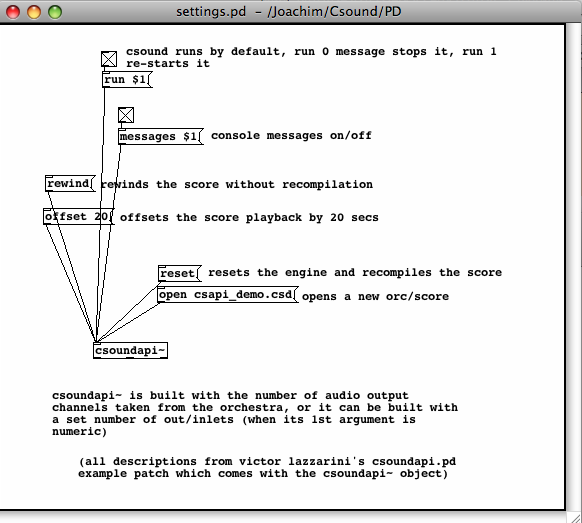

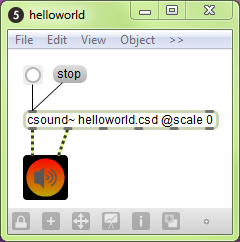

A. CSOUND IN PD

Joachim Heintz, Jim Aikin

B. CSOUND IN MAXMSP

Davis Pyon

C. CSOUND IN ABLETON LIVE

Rory Walsh

D. CSOUND AS A VST PLUGIN

Rory Walsh

10 CSOUND FRONTENDS

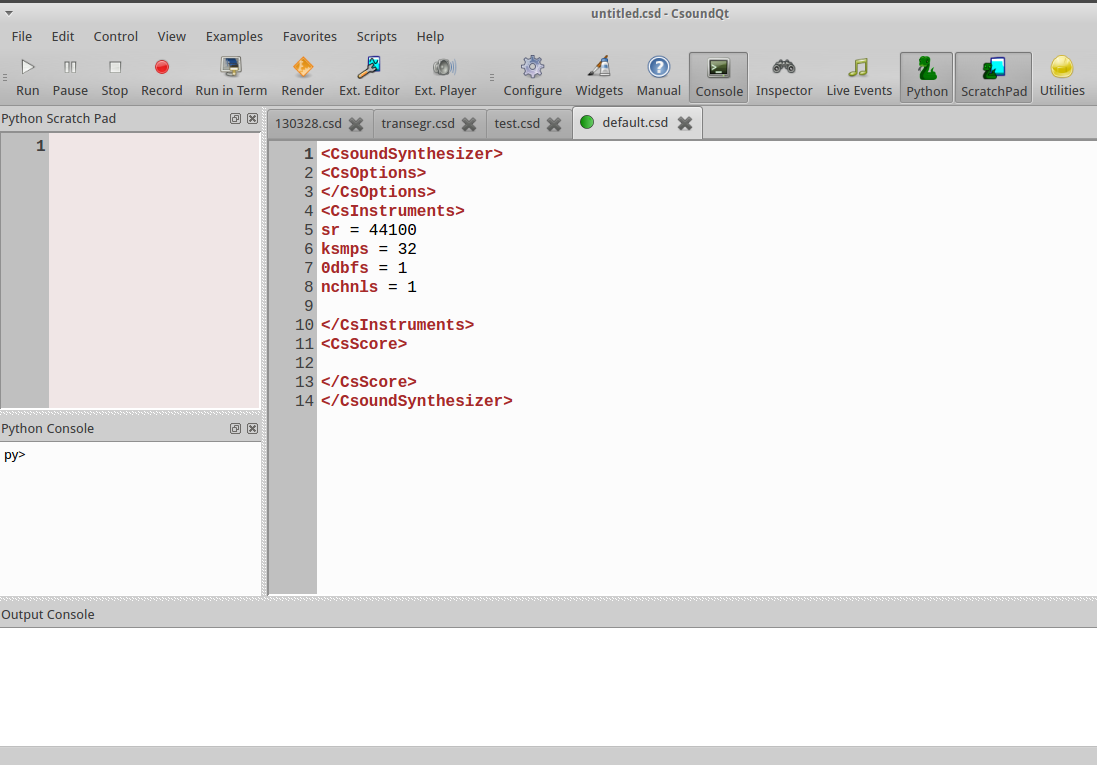

CSOUNDQT

Andrés Cabrera, Joachim Heintz, Peiman Khosravi

CABBAGE

Rory Walsh, Menno Knevel, Iain McCurdy

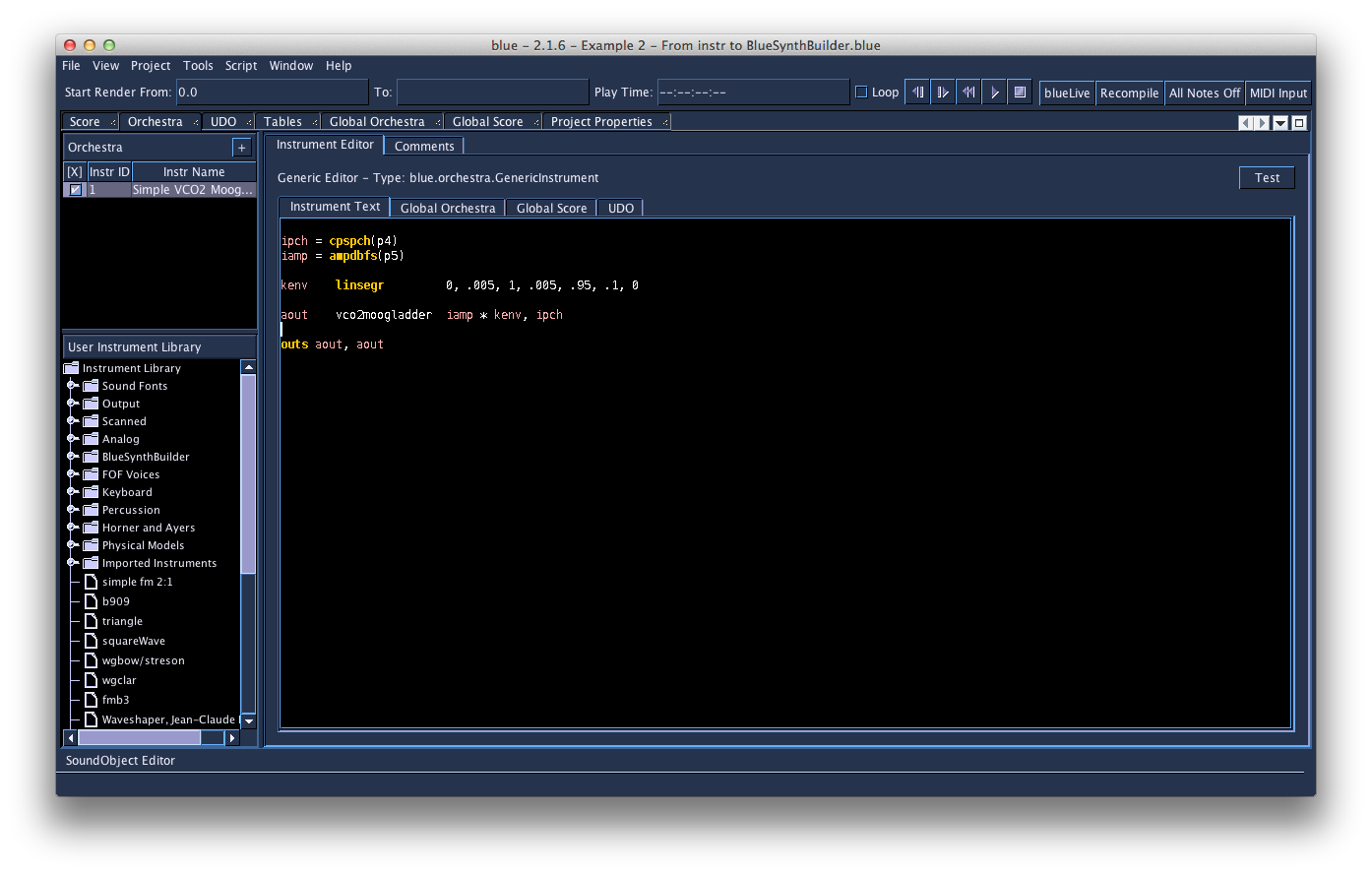

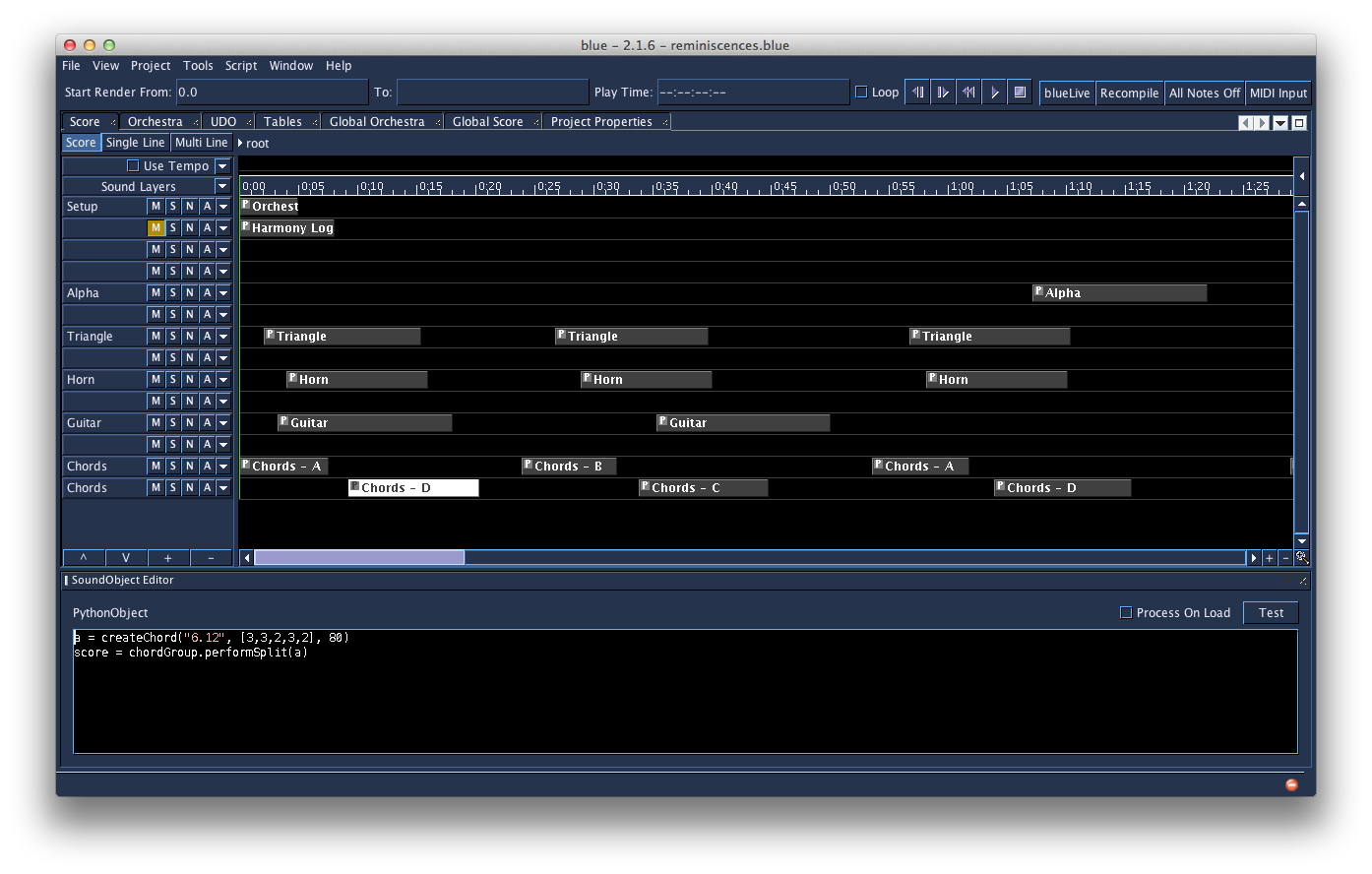

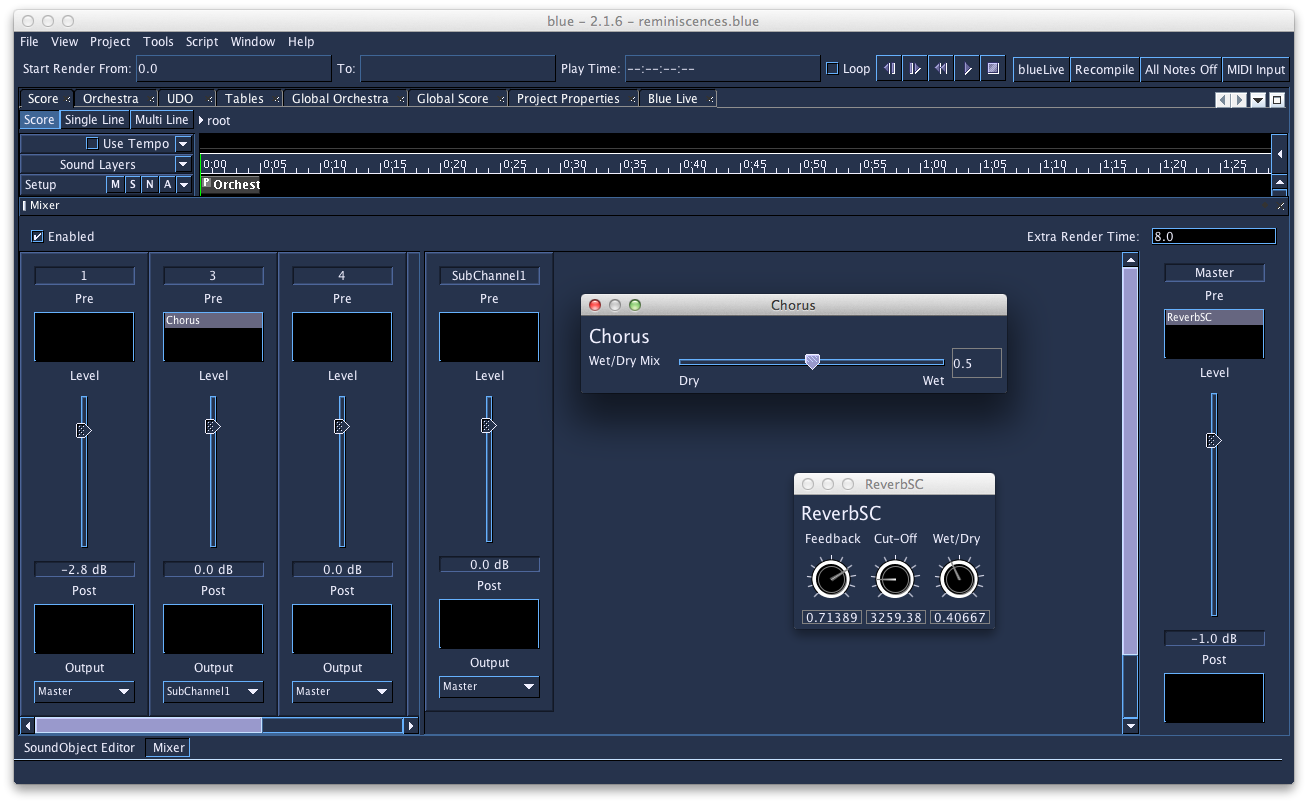

BLUE

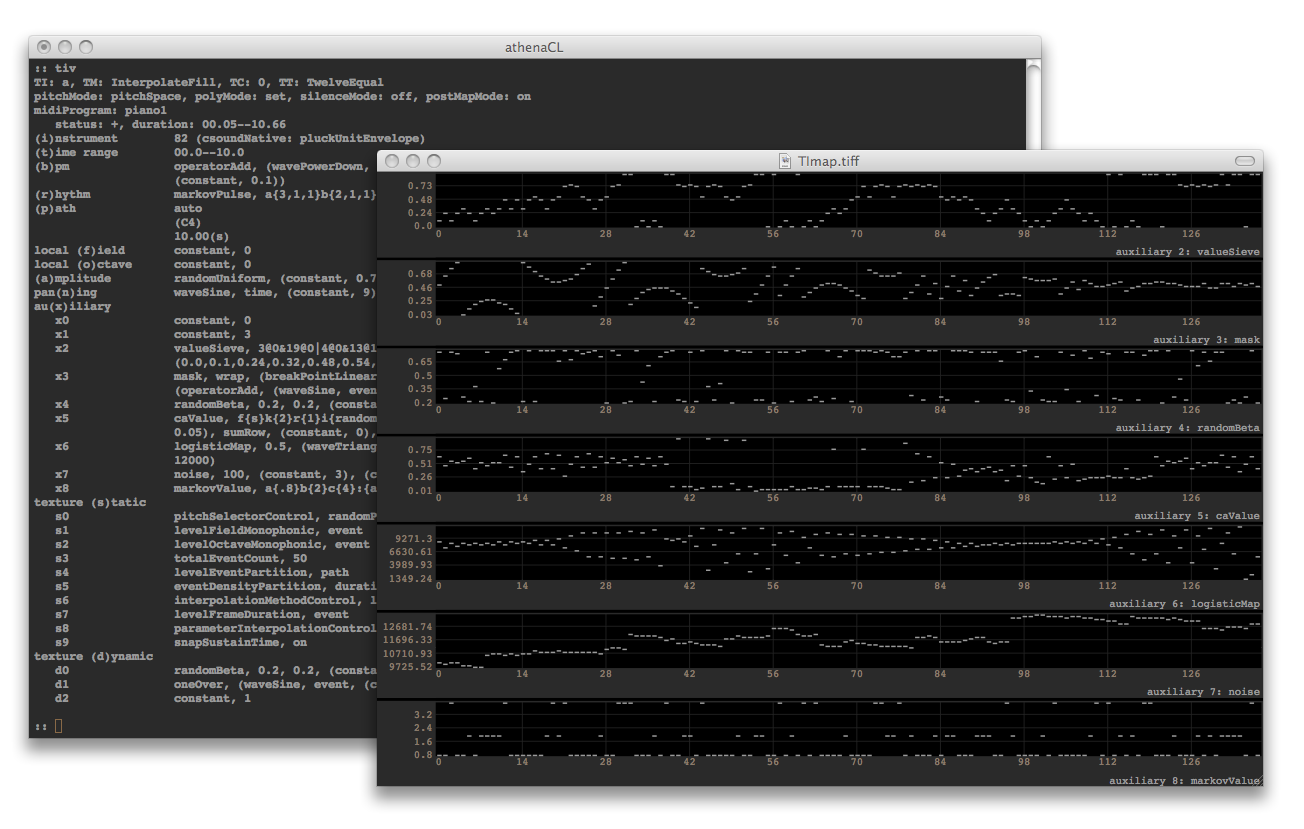

Steven Yi, Jan Jacob Hofmann

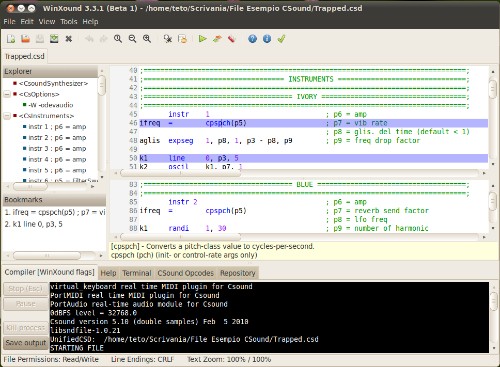

WINXOUND

Stefano Bonetti, Menno Knevel

CSOUND VIA TERMINAL

Iain McCurdy

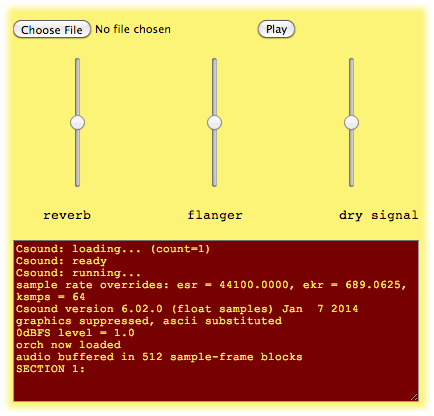

WEB BASED CSOUND

Victor Lazzarini, Iain McCurdy, Ed Costello

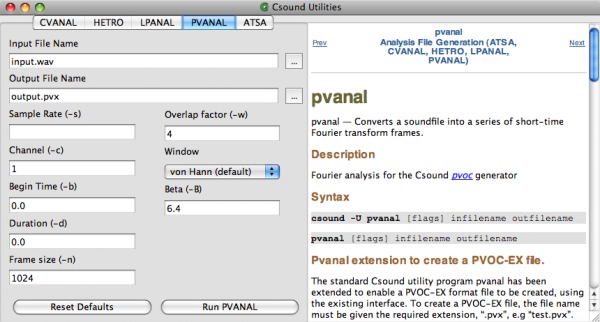

11 CSOUND UTILITIES

CSOUND UTILITIES

Iain McCurdy

12 CSOUND AND OTHER PROGRAMMING LANGUAGES

A. THE CSOUND API

François Pinot, Rory Walsh

B. PYTHON INSIDE CSOUND

Andrés Cabrera, Joachim Heintz, Jim Aikin

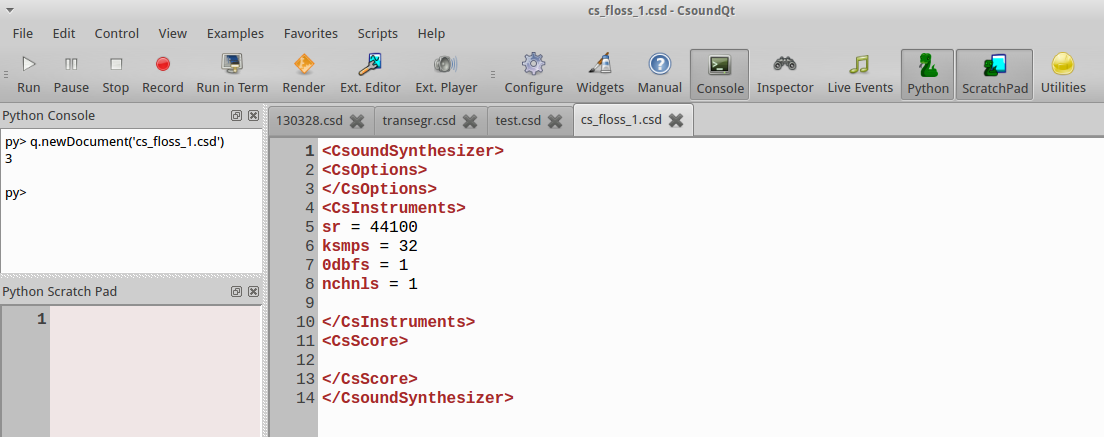

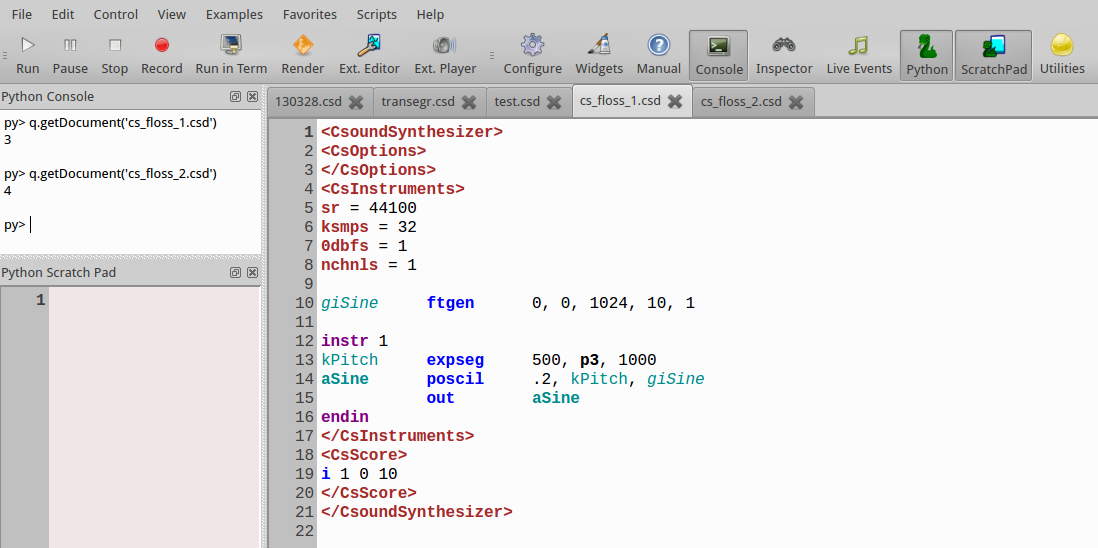

C. PYTHON IN CSOUNDQT

Tarmo Johannes, Joachim Heintz

D. LUA IN CSOUND

E. CSOUND IN IOS

Nicholas Arner, Nikhil Singh, Richard Boulanger

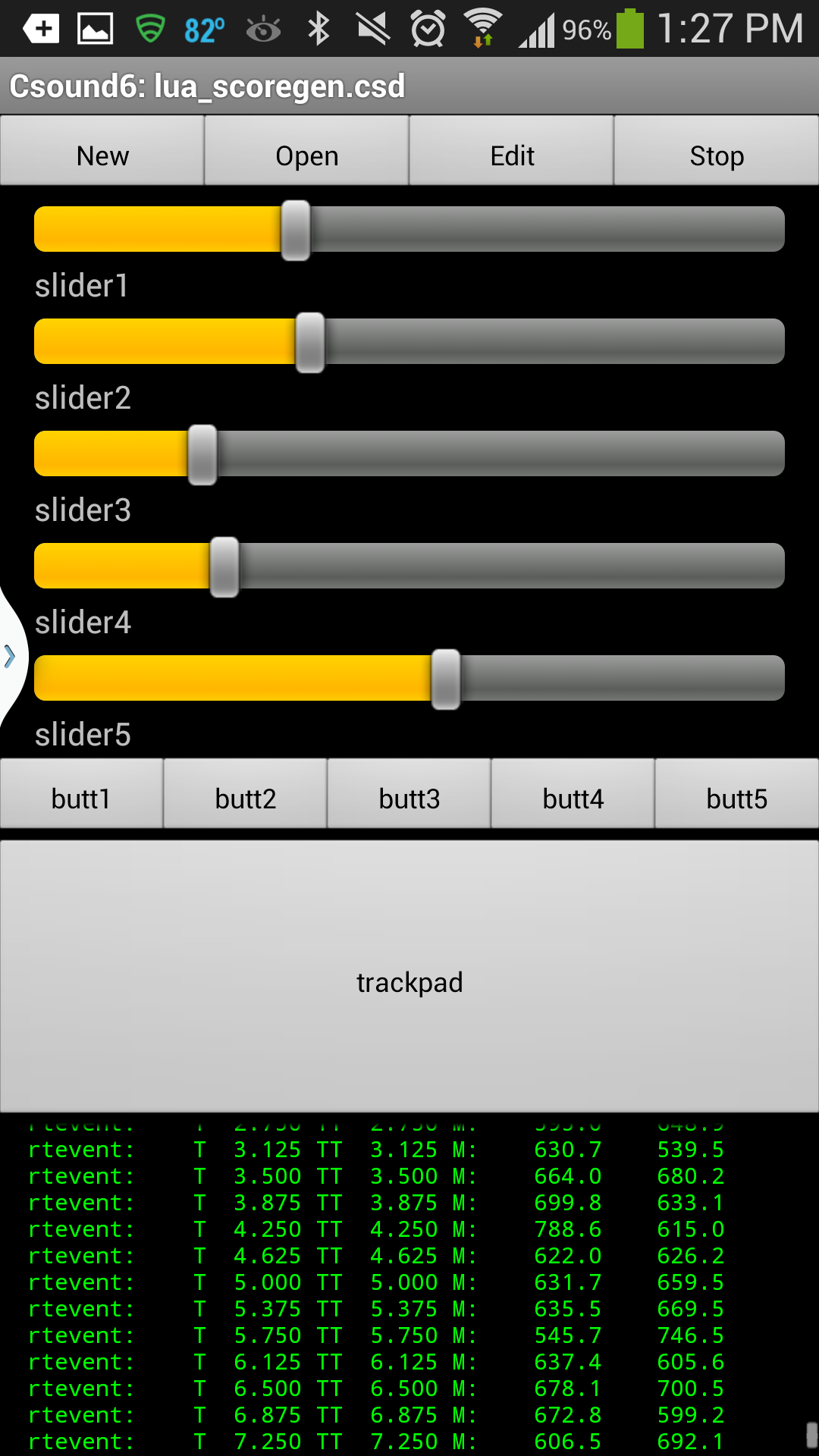

F. CSOUND ON ANDROID

Michael Gogins

G. CSOUND AND HASKELL

Anton Kholomiov

H. CSOUND AND HTML

Michael Gogins

13 EXTENDING CSOUND

EXTENDING CSOUND

Victor Lazzarini

OPCODE GUIDE

OVERVIEW

Joachim Heintz, Iain McCurdy

SIGNAL PROCESSING I

Joachim Heintz, Iain McCurdy

SIGNAL PROCESSING II

Joachim Heintz, Iain McCurdy

DATA

Joachim Heintz, Iain McCurdy

REALTIME INTERACTION

Joachim Heintz, Iain McCurdy

INSTRUMENT CONTROL

Joachim Heintz, Iain McCurdy

MATH, PYTHON/SYSTEM, PLUGINS

Joachim Heintz, Iain McCurdy

APPENDIX

GLOSSARY

Joachim Heintz, Iain McCurdy

LINKS

Joachim Heintz, Stefano Bonetti

METHODS OF WRITING CSOUND SCORES

Iain McCurdy, Joachim Heintz, Jacob Joaquin, Menno Knevel

V.1 - Final Editing Team in March 2011:

Joachim Heintz, Alex Hofmann, Iain McCurdy

V.2 - Final Editing Team in March 2012:

Joachim Heintz, Iain McCurdy

V.3 - Final Editing Team in March 2013:

Joachim Heintz, Iain McCurdy

V.4 - Final Editing Team in September 2013:

Joachim Heintz, Alexandre Abrioux

V.5 - Final Editing Team in March 2014:

Joachim Heintz, Iain McCurdy

V.6 - Final Editing Team March-June 2015:

Joachim Heintz, Iain McCurdy

Free manuals for free software

01 BASICS

DIGITAL AUDIO

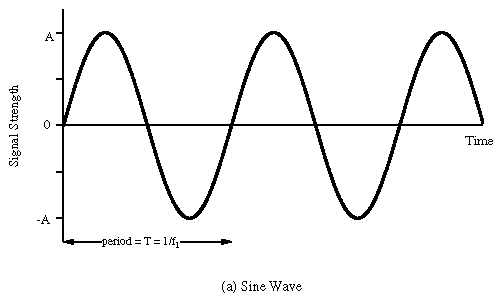

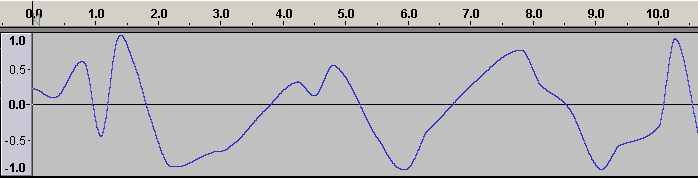

At a purely physical level, sound is simply a mechanical disturbance of a medium. The medium in question may be a solid, a liquid, a gas (most commonly air) or a combination of several of these. This disturbance in the medium causes molecules to move back and forth in a spring-like manner. As one molecule hits the next, the disturbance moves through the medium causing sound to travel. These so-called compressions and rarefactions in the medium can be described as sound waves. The simplest type of waveform, describing what is referred to as 'simple harmonic motion', is a sine wave.

Each time the waveform signal goes above 0 the molecules are in a state of compression, meaning that each molecule within the waveform disturbance is pushing into its neighbour. Each time the waveform signal drops below 0 the molecules are in a state of rarefaction, meaning the molecules are pulling away from their neighbours. When a waveform shows a clear repeating pattern, as in the case above, it is said to be periodic. Periodic sounds give rise to the sensation of pitch.

Elements of a Sound Wave

Periodic waves have five common parameters, and each of them affects the way we perceive sound.

- Period: the length of time it takes for a waveform to complete one cycle. This amount of time is referred to as t.

- Wavelength: the distance a wave travels while completing one full period. This is usually measured in meters.

- Frequency: the number of cycles or periods per second. Frequency is measured in Hertz. If a sound has a frequency of 440 Hz it completes 440 cycles every second. Given a frequency, one can easily calculate the period of any sound. Mathematically, the period is the reciprocal of the frequency (and vice versa). In equation form this is expressed as follows:

Frequency = 1/Period

Period = 1/Frequency

So a wave with a frequency of 100 Hz has a period of 1/100 or 0.01 secs; likewise a frequency of 256 Hz has a period of 1/256, or approximately 0.004 secs. To calculate the wavelength of a sound in any given medium we can use the following equation, where Velocity is the speed of sound in that medium:

Wavelength = Velocity/Frequency

Humans can hear frequencies from 20Hz to 20000Hz (although this can differ dramatically from individual to individual and the upper limit will decay with age). You can read more about frequency in the next chapter.

- Phase: the starting point of a waveform. The starting point along the Y-axis of our plotted waveform is not always zero. Phase can be expressed in degrees or in radians. A complete cycle of a waveform will cover 360 degrees or 2π radians.

- Amplitude: represented by the y-axis of a plotted pressure wave. The strength at which the molecules pull or push away from each other, which will also depend upon the resistance offered by the medium, will determine how far above and below zero - the point of equilibrium - the wave fluctuates. The greater the y-value the greater the amplitude of our wave. The greater the compressions and rarefactions, the greater the amplitude.
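The relationships between frequency, period and wavelength can be tried out in a few lines of Csound. The following is a small sketch which only prints values and produces no sound (the -n option). The value of 343 m/s for the speed of sound in air is an assumption made for this example, as are the variable names and the frequencies chosen in the score.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 1
0dbfs = 1

instr 1
  iFreq = p4                        ; frequency in Hz, taken from the score
  iVelocity = 343                   ; ASSUMED speed of sound in air (m/s)
  iPeriod = 1 / iFreq               ; Period = 1/Frequency
  iWavelength = iVelocity / iFreq   ; Wavelength = Velocity/Frequency
  prints "%d Hz: period = %f s, wavelength = %f m\n", iFreq, iPeriod, iWavelength
endin
</CsInstruments>
<CsScore>
; a duration of 0 means that only the initialization pass is performed
i 1 0 0 100
i 1 0 0 256
i 1 0 0 440
</CsScore>
</CsoundSynthesizer>
```

Changing iVelocity to the speed of sound in another medium gives the wavelength in that medium, while the period stays the same.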

Transduction

The analogue sound waves we hear in the world around us need to be converted into an electrical signal in order to be amplified or sent to a soundcard for recording. The process of converting acoustical energy in the form of pressure waves into an electrical signal is carried out by a device known as a transducer.

A transducer, which is usually found in microphones, produces a changing electrical voltage that mirrors the changing compression and rarefaction of the air molecules caused by the sound wave. The continuous variation of pressure is therefore 'transduced' into continuous variation of voltage. The greater the variation of pressure the greater the variation of voltage that is sent to the computer.

Ideally the transduction process should be as transparent as possible: whatever goes in should come out as a perfect analogue in a voltage representation. In reality, however, this will not be the case: noise and distortion are always incorporated into the signal. Every time sound passes through a transducer or is transmitted electrically a change in signal quality will result. When we talk of 'noise' we are talking specifically about any unwanted signal captured during the transduction process. This normally manifests itself as an unwanted 'hiss'.

Sampling

The analogue voltage that corresponds to an acoustic signal changes continuously so that at each instant in time it will have a different value. It is not possible for a computer to receive the value of the voltage for every instant because of the physical limitations of both the computer and the data converters (remember also that there are an infinite number of instants between every two instants!).

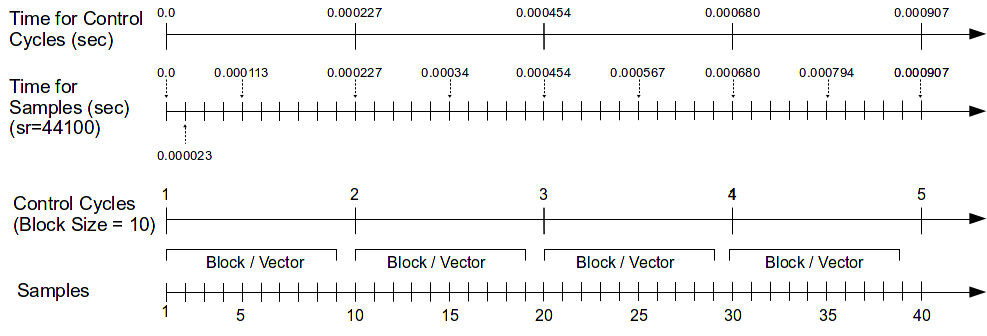

What the soundcard can do, however, is to measure the level of the analogue voltage at intervals of equal duration. This is how all digital recording works and this is known as 'sampling'. The result of this sampling process is a discrete, or digital, signal which is no more than a sequence of numbers corresponding to the voltage at each successive moment of sampling.

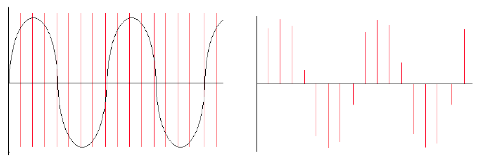

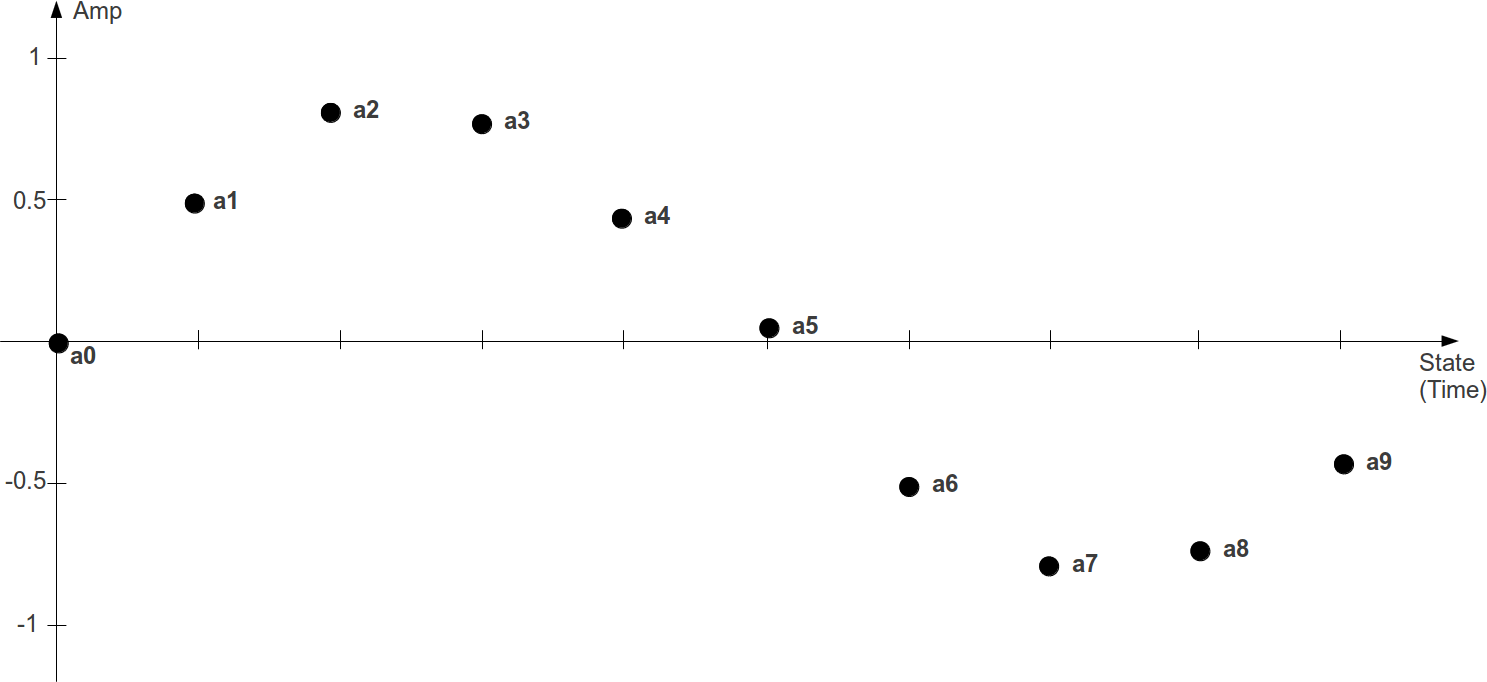

Below left is a diagram showing a sinusoidal waveform. The vertical lines that run through the diagram represent the points in time when a snapshot is taken of the signal. After the sampling has taken place we are left with what is known as a discrete signal consisting of a collection of audio samples, as illustrated in the diagram on the right hand side below. If one is recording using a typical audio editor the incoming samples will be stored in the computer's RAM (Random Access Memory). In Csound one can process the incoming audio samples in real time and output a new stream of samples or write them to disk in the form of a sound file.

It is important to remember that each sample represents the amount of voltage, positive or negative, that was present in the signal at the point in time at which the sample or snapshot was taken.
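To make the idea of a sequence of numbers more concrete, the following sketch calculates the first few samples of a sine wave at the moments of sampling and prints them. It is only an illustration under stated assumptions: the 1000 Hz frequency, the number of printed samples and the variable names are chosen for this example, and the values are computed with the sin function rather than read from a soundcard.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 1
0dbfs = 1

instr 1
  iFreq = 1000          ; ASSUMED frequency of the sampled sine in Hz
  iNumSamples = 8       ; how many samples to print
  iN = 0
  while iN < iNumSamples do
    iTime = iN / sr     ; point in time of sample number iN, in seconds
    iValue = sin(2 * $M_PI * iFreq * iTime)
    prints "sample %d at %f s: %f\n", iN, iTime, iValue
    iN += 1
  od
endin
</CsInstruments>
<CsScore>
i 1 0 0
</CsScore>
</CsoundSynthesizer>
```

Each printed line corresponds to one snapshot: the time at which it was taken and the (positive or negative) value of the signal at that moment.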

The same principle applies to recording of live video: a video camera takes a sequence of pictures of motion and most video cameras will take between 30 and 60 still pictures a second. Each picture is called a frame and when these frames are played in sequence at a rate corresponding to that at which they were taken we no longer perceive them as individual pictures, we perceive them instead as a continuous moving image.

Analogue versus Digital

In general, analogue systems can be quite unreliable when it comes to noise and distortion. Each time something is copied or transmitted some noise and distortion is introduced into the process. If this is repeated many times, the cumulative effect can deteriorate a signal quite considerably. It is for this reason that the music industry has almost entirely turned to digital technology. One particular advantage of storing a signal digitally is that once the changing signal has been converted to a discrete series of values, it can effectively be 'cloned', and clones can be made of that clone with no loss or distortion of data. Mathematical routines can be applied to detect and correct errors in transmission, which could otherwise introduce noise into the signal.

Sample Rate and the Sampling Theorem

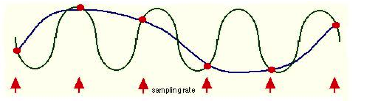

The sample rate describes the number of samples (pictures/snapshots) taken each second. To sample an audio signal correctly it is important to pay attention to the sampling theorem:

"To represent digitally a signal containing frequencies up to X Hz, it is necessary to use a sampling rate of at least 2X samples per second"

According to this theorem, a soundcard or any other digital recording device will not be able to represent any frequency above half the sampling rate. Half the sampling rate is also referred to as the Nyquist frequency, after the Swedish-born physicist Harry Nyquist who formalized the theory in the 1920s. What it all means is that any signal with frequencies above the Nyquist frequency will be misrepresented and will actually produce a frequency lower than the one being sampled. When this happens it results in what is known as 'aliasing' or 'foldover'.

Aliasing

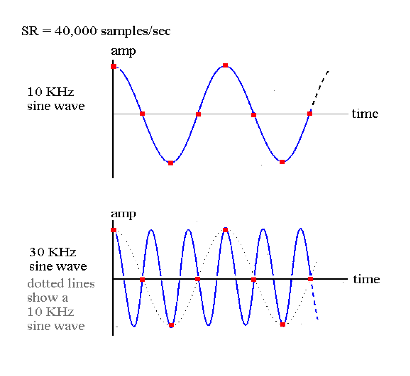

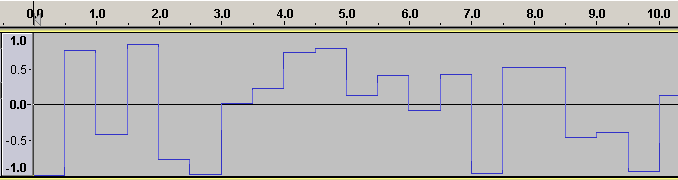

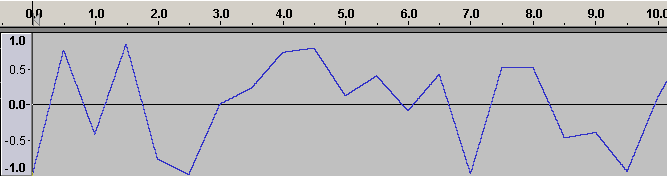

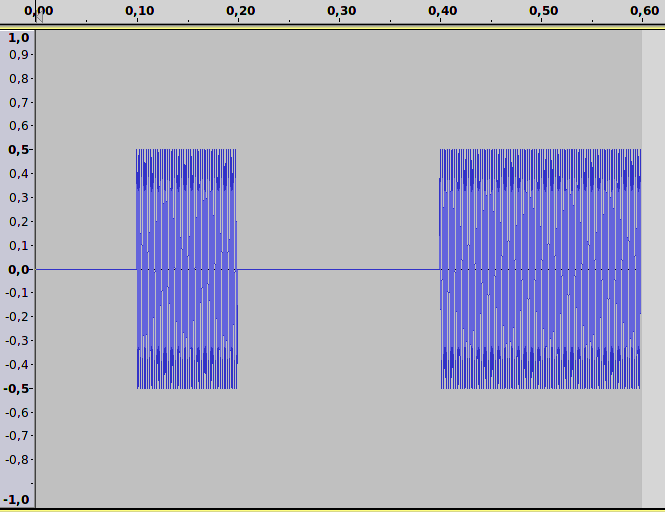

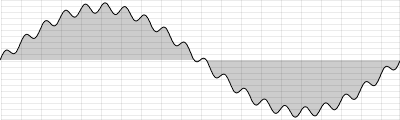

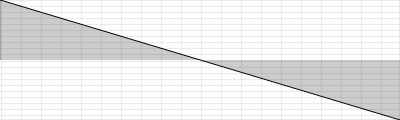

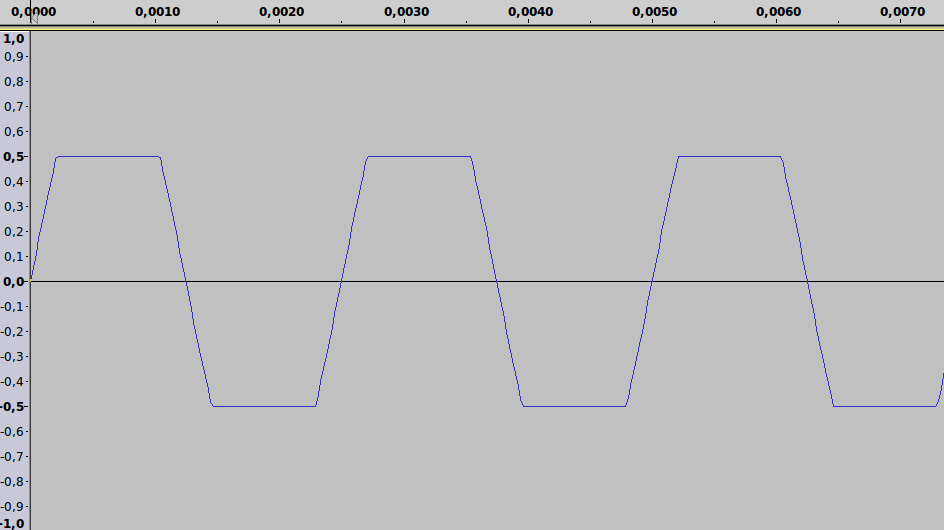

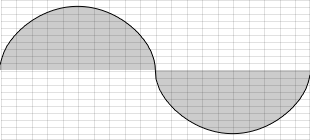

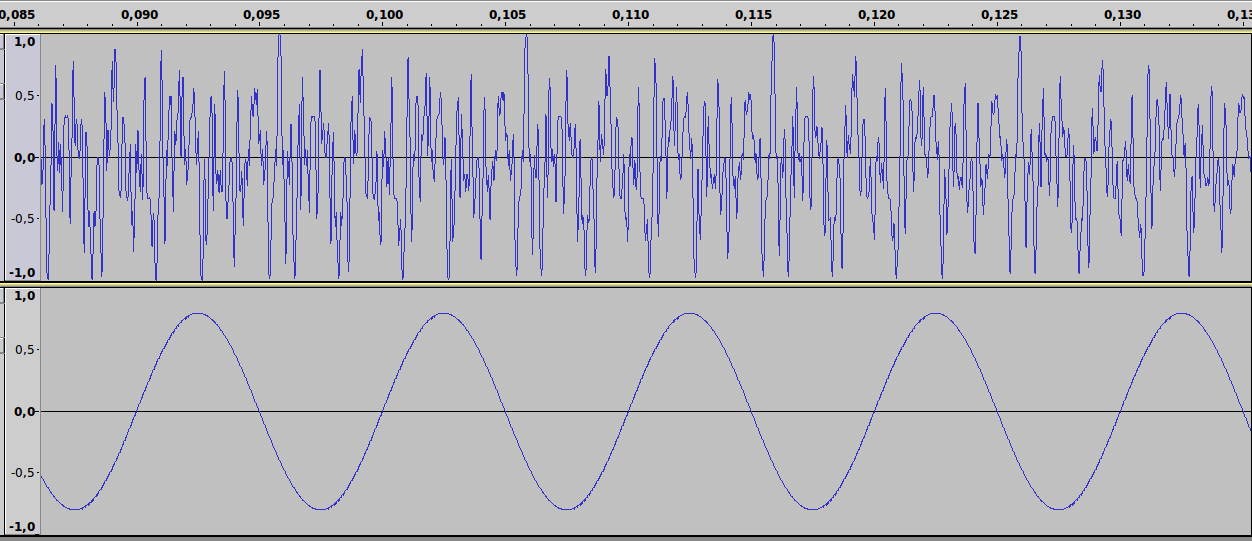

Here is a graphical representation of aliasing.

The sinusoidal waveform in blue is being sampled where arrows are indicated along the timeline. The line that joins the red circles together is the captured waveform. As you can see, the captured waveform and the original waveform express different frequencies.

Here is another example:

We can see that if the sample rate is 40,000 Hz there is no problem with sampling a signal that is 10 kHz. On the other hand, in the second example it can be seen that a 30 kHz waveform is not going to be correctly sampled. In fact we end up with a waveform that is 10 kHz, rather than 30 kHz. This may seem like an academic proposition in that we will never be able to hear a 30 kHz waveform anyway, but some synthesis and DSP techniques will produce these frequencies as unavoidable by-products and we need to ensure that they do not result in unwanted artifacts.

The following Csound instrument plays a 1000 Hz tone first directly, and then as an alias: the second note asks for 43100 Hz, which is 1000 Hz lower than the sample rate of 44100 Hz and is therefore heard as 1000 Hz:

EXAMPLE 01A01_Aliasing.csd

<CsoundSynthesizer>

<CsOptions>

-odac

</CsOptions>

<CsInstruments>

;example by Joachim Heintz

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr 1

asig oscils .2, p4, 0

outs asig, asig

endin

</CsInstruments>

<CsScore>

i 1 0 2 1000 ;1000 Hz tone

i 1 3 2 43100 ;43100 Hz tone sounds like 1000 Hz because of aliasing

</CsScore>

</CsoundSynthesizer>
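The frequency that results from aliasing can also be calculated rather than heard. The sketch below is one possible way to do this: it subtracts the nearest whole multiple of the sample rate from the requested frequency and takes the absolute value. The sample rate is passed as p5 so that the figures used in this section can be checked without changing the header; the formula and the variable names are assumptions of this example, not something defined by Csound.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 1
0dbfs = 1

instr 1
  iFreq = p4     ; requested frequency in Hz
  iSr = p5       ; sample rate to test against (independent of the header)
  ; distance to the nearest whole multiple of the sample rate
  iAlias = abs(iFreq - iSr * round(iFreq / iSr))
  prints "%d Hz sampled at %d Hz is represented as %d Hz\n", iFreq, iSr, iAlias
endin
</CsInstruments>
<CsScore>
i 1 0 0 10000 40000  ;below the Nyquist frequency: stays at 10000 Hz
i 1 0 0 30000 40000  ;above the Nyquist frequency: folds to 10000 Hz
i 1 0 0 43100 44100  ;the case from the example above: folds to 1000 Hz
</CsScore>
</CsoundSynthesizer>
```

Any frequency up to half the sample rate is returned unchanged, which corresponds to the statement of the sampling theorem above.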

The same phenomenon takes place in film and video too. You may recall having seen wagon wheels apparently turn at the wrong speed in old Westerns. Let us say for example that a camera is taking 60 frames per second of a wheel moving. In one example, if the wheel is completing one rotation in exactly 1/60th of a second, then every picture looks the same and as a result the wheel appears to be motionless. If the wheel slows down slightly, it completes a little less than a full rotation between snapshots and appears to be slowly turning backwards. If it speeds up slightly, it completes a little more than a full rotation between snapshots and appears to be slowly turning forwards.

As an aside, it is worth observing that a lot of modern 'glitch' music intentionally makes a feature of the spectral distortion that aliasing induces in digital audio. Csound is perfectly capable of imitating the effects of aliasing while being run at any sample rate - if that is what you desire.

Audio-CD quality uses a sample rate of 44100 Hz (44.1 kHz). This means that CD quality can only represent frequencies up to 22050 Hz. Humans typically have an absolute upper limit of hearing of about 20 kHz, thus making 44.1 kHz a reasonable standard sampling rate.

Bits, Bytes and Words. Understanding Binary.

All digital computers represent data as a collection of bits (short for binary digit). A bit is the smallest possible unit of information. One bit can only be in one of two states - off or on, 0 or 1. The meaning of the bit - which can represent almost anything - is unimportant at this point, the thing to remember is that all computer data - a text file on disk, a program in memory, a packet on a network - is ultimately a collection of bits.

Bits in groups of eight are called bytes, and one byte usually represents a single character of data in the computer. It's a little-used term, but you might be interested in knowing that a nibble is half a byte (usually 4 bits).

The Binary System

All digital computers work in an environment that has only two values, 0 and 1. All numbers in our decimal system therefore must be translated into 0's and 1's in the binary system. It can help to think of binary numbers in terms of switches. With one switch you can represent up to two different numbers.

0 (OFF) = Decimal 0

1 (ON) = Decimal 1

Thus, a single bit represents 2 numbers, two bits can represent 4 numbers, three bits represent 8 numbers, four bits represent 16 numbers, and so on up to a byte, or eight bits, which represents 256 numbers. Therefore each added bit doubles the number of possible values that can be represented. Put simply, the more bits you have at your disposal the more information you can store.
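The doubling can be followed step by step with a short loop. This is a minimal sketch which prints, for each number of bits from 1 to 16, how many different values can be represented (2 to the power of the number of bits). The upper limit of 16 and the variable names are assumptions chosen for this illustration.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 1
0dbfs = 1

instr 1
  iBits = 1
  while iBits <= 16 do
    iValues = 2 ^ iBits   ; each added bit doubles the number of values
    prints "%d bits: %d values\n", iBits, iValues
    iBits += 1
  od
endin
</CsInstruments>
<CsScore>
i 1 0 0
</CsScore>
</CsoundSynthesizer>
```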

Bit-depth Resolution

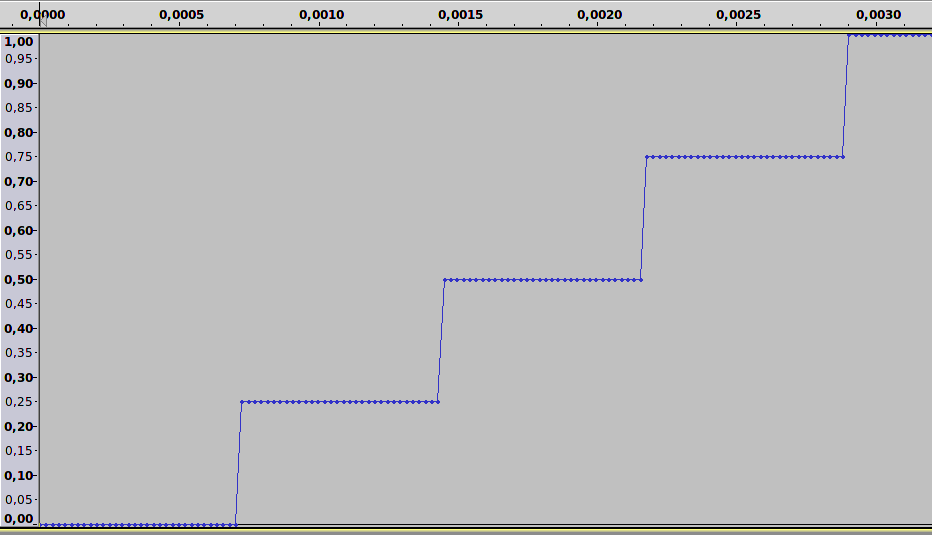

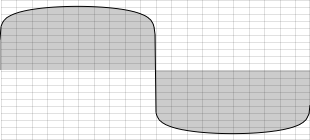

Apart from the sample rate, another important attribute that can affect the fidelity of a digital signal is the accuracy with which each sample is known, its resolution or granularity. Every sample obtained is set to a specific amplitude (the measure of strength for each voltage) level. Each voltage measurement will probably have to be rounded up or down to the nearest digital value available. The number of levels available depends on the precision of the measurement in bits, i.e. how many binary digits are used to store the samples. The number of bits that a system can use is normally referred to as the bit-depth resolution.

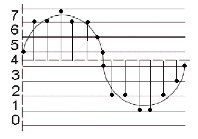

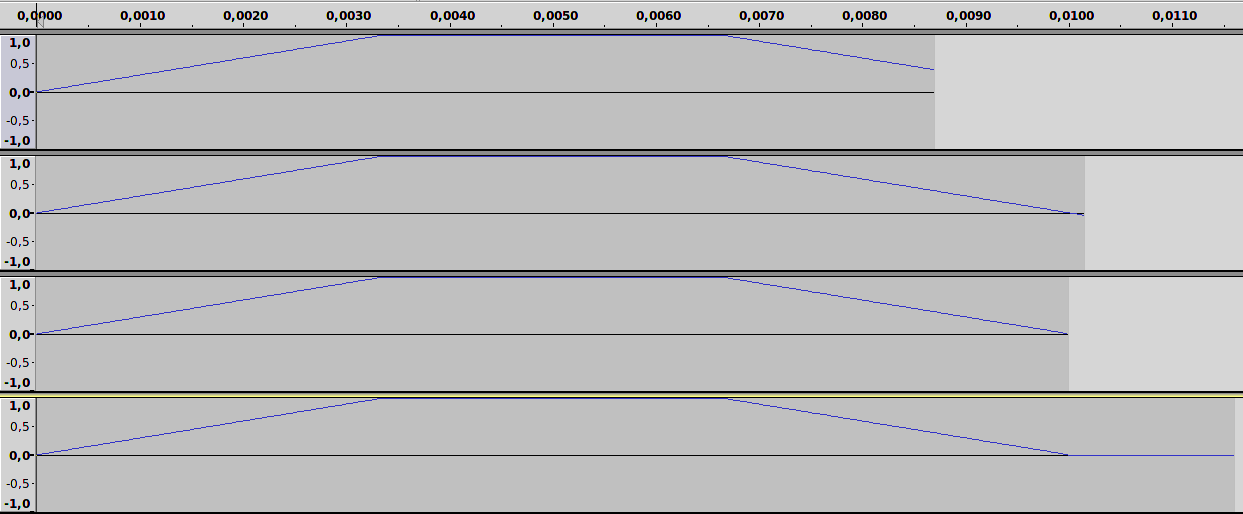

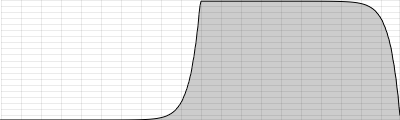

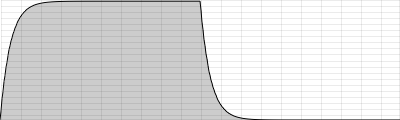

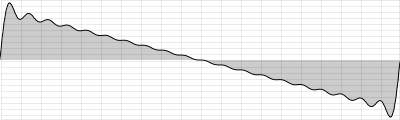

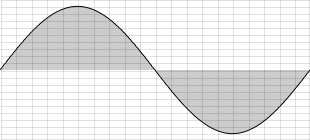

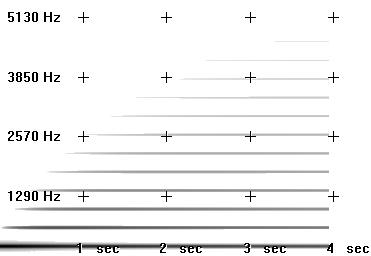

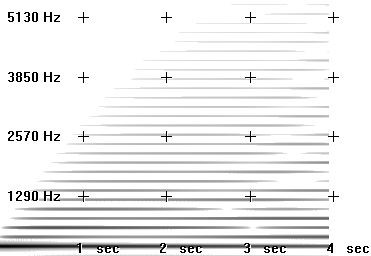

If the bit-depth resolution is 3 then there are 8 possible levels of amplitude that we can use for each sample. We can see this in the diagram below. At each sampling period the soundcard plots an amplitude. As we are only using a 3-bit system the resolution is not good enough to plot the correct amplitude of each sample. We can see in the diagram that some vertical lines stop above or below the real signal. This is because our bit-depth is not high enough to plot the amplitude levels with sufficient accuracy at each sampling period.

The standard resolution for CDs is 16 bit, which allows for 65536 different possible amplitude levels, ranging from -32768 to +32767 around the zero axis. Using bit depths lower than 16 is not a good idea as it will result in noise being added to the signal. This is referred to as quantization noise and is a result of amplitude values being excessively rounded up or down when being digitized. Quantization noise becomes most apparent when trying to represent low amplitude (quiet) sounds. Frequently a tiny amount of noise, known as a dither signal, will be added to digital audio before conversion back into an analogue signal. Adding this dither signal will actually reduce the more noticeable noise created by quantization. As higher bit depth resolutions are employed in the digitizing process the need for dithering is reduced. A general rule is to use the highest bit depth available.

Many electronic musicians make use of deliberately low bit depth quantization in order to add noise to a signal. The effect is commonly known as 'bit-crunching' and is relatively easy to implement in Csound.
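A minimal sketch of such an effect, assuming the simplest possible approach: each sample is scaled to the number of available levels, rounded to the nearest level, and scaled back. The instrument name bitcrunch and the use of p4 for the bit depth are illustrative choices and are not taken from the original text.

```csound
<CsoundSynthesizer>
<CsOptions>
-odac
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1

instr bitcrunch
 iBits = p4                               ;bit depth to simulate
 iLevels = 2^(iBits-1)                    ;quantization steps on each side of zero
 aSig oscils .5, 220, 0                   ;clean sine as test signal
 aCrunch = round(aSig*iLevels) / iLevels  ;round each sample to the nearest step
 outs aCrunch, aCrunch
endin
</CsInstruments>
<CsScore>
i "bitcrunch" 0 2 16
i "bitcrunch" 3 2 6
i "bitcrunch" 6 2 3
</CsScore>
</CsoundSynthesizer>
```

The first note should sound like a clean sine, while the added quantization noise becomes more and more obvious as the simulated bit depth drops to 6 and then 3 bits.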

ADC / DAC

The entire process, as described above, of taking an analogue signal and converting it to a digital signal is referred to as analogue to digital conversion, or ADC. Of course digital to analogue conversion, DAC, is also possible. This is how we get to hear our music through our PC's headphones or speakers. For example, if one plays a sound from Media Player or iTunes the software will send a series of numbers to the computer soundcard. In fact it will most likely send 44100 numbers a second. If the audio that is playing is 16 bit then these numbers will range from -32768 to +32767.

When the sound card receives these numbers from the audio stream it will output corresponding voltages to a loudspeaker. When the voltages reach the loudspeaker they cause the loudspeaker's cone to move inwards and outwards. This causes a disturbance in the air around the speaker - the compressions and rarefactions introduced at the beginning of this chapter - resulting in what we perceive as sound.

FREQUENCIES

As mentioned in the previous section, frequency is defined as the number of cycles or periods per second. Frequency is measured in Hertz. If a tone has a frequency of 440Hz it completes 440 cycles every second. Given a tone's frequency, one can easily calculate the period of any sound. Mathematically, the period is the reciprocal of the frequency and vice versa. In equation form, this is expressed as follows.

$$\text{Frequency} = \frac{1}{\text{Period}} \qquad \text{Period} = \frac{1}{\text{Frequency}}$$

A wave of 100Hz frequency therefore has a period of 1/100 or 0.01 seconds; likewise a frequency of 256Hz has a period of 1/256, or approximately 0.004 seconds. To calculate the wavelength of a sound in any given medium we can use the following equation:

λ = Velocity/Frequency

For instance, a wave of 1000 Hz in air (speed of propagation about 340 m/s) has a wavelength of approximately 340/1000 m = 34 cm.
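As a further worked example of both relationships, take the 440 Hz tone mentioned above and assume the same speed of sound of about 340 m/s; $T$ and $f$ are shorthand introduced here for period and frequency:

$$T = \frac{1}{f} = \frac{1}{440\ \text{Hz}} \approx 0.00227\ \text{s} \qquad \lambda = \frac{340\ \text{m/s}}{440\ \text{Hz}} \approx 0.77\ \text{m}$$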

Upper and Lower Limits of Hearing

It is generally stated that the human ear can hear sounds in the range 20Hz to 20,000Hz (20kHz). This upper limit tends to decrease with age due to a condition known as presbyacusis, or age related hearing loss. Most adults can hear to about 16 kHz while most children can hear beyond this. At the lower end of the spectrum the human ear does not respond to frequencies below 20 Hz, with 40 or 50 Hz being the lowest most people can perceive.

So, in the following example, you will not hear the first (10 Hz) tone, and probably not the last (20 kHz) one, but hopefully the other ones (100 Hz, 1000 Hz, 10000 Hz):

EXAMPLE 01B01_LimitsOfHearing.csd

<CsoundSynthesizer>

<CsOptions>

-odac -m0

</CsOptions>

<CsInstruments>

;example by joachim heintz

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr 1

prints "Playing %d Hertz!\n", p4

asig oscils .2, p4, 0

outs asig, asig

endin

</CsInstruments>

<CsScore>

i 1 0 2 10

i . + . 100

i . + . 1000

i . + . 10000

i . + . 20000

</CsScore>

</CsoundSynthesizer>

Logarithms, Frequency Ratios and Intervals

A lot of basic maths is about simplification of complex equations. Shortcuts are taken all the time to make things easier to read and equate. Multiplication can be seen as a shorthand for repeated additions, for example, 5 x 10 = 5+5+5+5+5+5+5+5+5+5. Exponents are shorthand for repeated multiplications, $3^5$ = 3 x 3 x 3 x 3 x 3. Logarithms are shorthand for exponents and are used in many areas of science and engineering in which quantities vary over a large range. Examples of logarithmic scales include the decibel scale, the Richter scale for measuring earthquake magnitudes and the astronomical scale of stellar brightnesses. Musical frequencies also work on a logarithmic scale; more on this later.

Intervals in music describe the distance between two notes. When dealing with standard musical notation it is easy to determine an interval between two adjacent notes. For example a perfect 5th is always made up of 7 semitones. When dealing with Hz values things are different. A difference of say 100Hz does not always equate to the same musical interval. This is because musical intervals as we hear them are represented in Hz as frequency ratios. An octave for example is always 2:1. That is to say every time you double a Hz value you will jump up by a musical interval of an octave.

Consider the following. A flute can play the note A at 440Hz. If the player plays another A an octave above it at 880 Hz the difference in Hz is 440. Now consider the piccolo, the highest pitched instrument of the orchestra. It can play a frequency of 2000Hz but it can also play an octave above this at 4000Hz (2 x 2000Hz). While the difference in Hertz between the two notes on the flute is only 440Hz, the difference between the two high pitched notes on the piccolo is 2000Hz, yet both pairs of notes are only one octave apart.

What all this demonstrates is that the higher two pitches become, the greater the difference in Hertz required for us to recognize the spacing as the same musical interval. We can use simple ratios to represent a number of familiar intervals; for example the unison: (1:1), the octave: (2:1), the perfect fifth (3:2), the perfect fourth (4:3), the major third (5:4) and the minor third (6:5); but it should be noted that most of these intervals are only represented with absolute precision when using just intonation. In equal temperament, the dominant method used in the tuning of many instruments, only unison and the octave are represented with these precise ratios.

The following example shows the difference between adding a certain frequency and applying a ratio. First, the frequencies of 100, 400 and 800 Hz all get an addition of 100 Hz. This sounds very different, though the added frequency is the same. Second, the ratio 3/2 (perfect fifth) is applied to the same frequencies. This spacing sounds constant, although the frequency displacement is different each time.

EXAMPLE 01B02_Adding_vs_ratio.csd

<CsoundSynthesizer>

<CsOptions>

--env:SSDIR+=../SourceMaterials -odac -m0

</CsOptions>

<CsInstruments>

;example by joachim heintz

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr 1

prints "Playing %d Hertz!\n", p4

asig oscils .2, p4, 0

outs asig, asig

endin

instr 2

prints "Adding %d Hertz to %d Hertz!\n", p5, p4

asig oscils .2, p4+p5, 0

outs asig, asig

endin

instr 3

prints "Applying the ratio of %f (adding %d Hertz) to %d Hertz!\n", p5, p4*p5-p4, p4

asig oscils .2, p4*p5, 0

outs asig, asig

endin

</CsInstruments>

<CsScore>

;adding a certain frequency (instr 2)

i 1 0 1 100

i 2 1 1 100 100

i 1 3 1 400

i 2 4 1 400 100

i 1 6 1 800

i 2 7 1 800 100

;applying a certain ratio (instr 3)

i 1 10 1 100

i 3 11 1 100 [3/2]

i 1 13 1 400

i 3 14 1 400 [3/2]

i 1 16 1 800

i 3 17 1 800 [3/2]

</CsScore>

</CsoundSynthesizer>

As some readers will know, the current preferred method of tuning western instruments is based on equal temperament. Essentially this means that all octaves are split into 12 equal intervals. Therefore a semitone has a ratio of $2^{1/12}$, which is approximately 1.059463.

So what about the reference to logarithms in the heading above? As stated previously, logarithms are shorthand for exponents. $2^{1/12} \approx 1.059463$ can also be written as $\log_2(1.059463) \approx 1/12$. Therefore musical frequency works on a logarithmic scale.
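To see how close equal temperament comes to the simple ratios listed earlier, the same exponent can be applied to larger intervals. The following lines are an illustrative calculation; $k$ stands for the number of semitones and $f_1$, $f_2$ for two frequencies, symbols which are introduced here only for this purpose:

$$\frac{f_2}{f_1} = 2^{k/12} \qquad k = 12 \cdot \log_2 \frac{f_2}{f_1}$$

$$2^{7/12} \approx 1.498307 \quad \text{(perfect fifth, compared with } 3/2 = 1.5\text{)}$$

$$2^{4/12} \approx 1.259921 \quad \text{(major third, compared with } 5/4 = 1.25\text{)}$$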

MIDI Notes

Csound can easily deal with MIDI notes and comes with functions that will convert MIDI notes to Hertz values and back again. In MIDI speak A440 is equal to A4 and is MIDI note 69. You can think of A4 as being the fourth A from the lowest A we can hear; well, almost hear.

Caution: like many 'standards' there is occasional disagreement about the mapping between frequency and octave number. You may occasionally encounter A440 being described as A3.
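The conversion in both directions can be sketched as follows. The example assumes the default tuning of A4 = 440 Hz; it uses the cpsmidinn opcode for the direction from MIDI note number to Hertz, and writes out the way back with the log2 function so that the logarithmic relationship stays visible. The layout of the printed line is arbitrary.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1

instr 1
 iNote = p4
 iFreq cpsmidinn iNote                ;MIDI note number -> Hertz
 iBack = 69 + 12 * log2(iFreq/440)    ;Hertz -> MIDI note number
 prints "MIDI note %d = %.3f Hz = MIDI note %.1f\n", iNote, iFreq, iBack
endin
</CsInstruments>
<CsScore>
i 1 0 .1 57 ;A one octave below A440
i 1 + .  69 ;A440
i 1 + .  81 ;A one octave above A440
</CsScore>
</CsoundSynthesizer>
```

Each step of 12 in the MIDI note number doubles or halves the frequency, which is the octave ratio of 2:1 described earlier.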

INTENSITIES

Real World Intensities and Amplitudes

There are many ways to describe a sound physically. One of the most common is the sound intensity level (SIL). It describes the amount of power on a certain surface, so its unit is Watts per square meter ($W/m^2$). The range of human hearing is about $10^{-12}\ W/m^2$ at the threshold of hearing to $10^{0}\ W/m^2$ at the threshold of pain. For ordering this immense range, and to facilitate the measurement of one sound intensity based upon its ratio with another, a logarithmic scale is used. The unit Bel describes the relation of one intensity $I$ to a reference intensity $I_0$ as follows:

$$\log_{10} \frac{I}{I_0}$$

Sound Intensity Level in Bel

If, for example, the ratio is 10, this is 1 Bel. If the ratio is 100, this is 2 Bel.

For real world sounds, it makes sense to set the reference value $I_0$ to the threshold of hearing which has been fixed as $10^{-12}\ W/m^2$ at 1000 Hertz. So the range of human hearing covers about 12 Bel. Usually 1 Bel is divided into 10 decibel, so the common formula for measuring a sound intensity is:

$$10 \cdot \log_{10} \frac{I}{I_0}$$

sound intensity level (SIL) in decibel (dB) with $I_0 = 10^{-12}\ W/m^2$

While the sound intensity level is useful in describing the way in which human hearing works, the measurement of sound is more closely related to the sound pressure deviations. Sound waves compress and expand the air particles and by this they increase and decrease the localized air pressure. These deviations are measured and transformed by a microphone. The question arises: what is the relationship between the sound pressure deviations and the sound intensity? The answer is: sound intensity changes are proportional to the square of the sound pressure changes. As a formula:

$$I \propto P^2$$

Relation between Sound Intensity and Sound Pressure

Let us take an example to see what this means. The sound pressure at the threshold of hearing can be fixed at $2 \cdot 10^{-5}\ Pa$. This value is the reference value of the Sound Pressure Level (SPL). If we now have a value of $2 \cdot 10^{-4}\ Pa$, the corresponding sound intensity relationship can be calculated as:

$$\left(\frac{2 \cdot 10^{-4}}{2 \cdot 10^{-5}}\right)^2 = 10^2 = 100$$

Therefore a factor of 10 in a pressure relationship yields a factor of 100 in the intensity relationship. In general, the dB scale for the pressure $P$ related to the pressure $P_0$ is:

$$10 \cdot \log_{10} \left(\frac{P}{P_0}\right)^2 = 20 \cdot \log_{10} \frac{P}{P_0}$$

Sound pressure level (SPL) in decibels (dB) with $P_0 = 2 \cdot 10^{-5}\ Pa$

Working with digital audio means working with amplitudes. Any audio file is a sequence of amplitudes. What you generate in Csound and write either to the DAC in realtime or to a sound file, are again nothing but a sequence of amplitudes. As amplitudes are directly related to the sound pressure deviations, all the relationships between sound intensity and sound pressure can be transferred to relationships between sound intensity and amplitudes:

$$I \propto A^2$$

Relationship between intensity and amplitudes

$$20 \cdot \log_{10} \frac{A}{A_0}$$

Decibel (dB) scale of amplitudes with any amplitude $A$ related to another amplitude $A_0$

If you drive an oscillator with an amplitude of 1, and another oscillator with an amplitude of 0.5 and you want to know the difference in dB, you can calculate this as follows:

$$20 \cdot \log_{10} \frac{1}{0.5} = 20 \cdot \log_{10} 2 \approx 20 \cdot 0.30103 = 6.0206\ \text{dB}$$

The most useful thing to bear in mind is that when you double an amplitude this will provide a change of +6 dB, and when you halve an amplitude this will provide a change of -6 dB.
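This rule of thumb can be checked in Csound itself. The following sketch relies on the converters dbamp (amplitude ratio to dB, i.e. 20 times the base 10 logarithm of the ratio) and ampdb (dB back to an amplitude ratio); the choice of test ratios in the score is arbitrary.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1

instr 1
 iRatio = p4                ;amplitude ratio to examine
 iDb = dbamp(iRatio)        ;20 * log10(iRatio)
 iBack = ampdb(iDb)         ;10 ^ (iDb/20)
 prints "ratio %.3f = %.4f dB = ratio %.3f\n", iRatio, iDb, iBack
endin
</CsInstruments>
<CsScore>
i 1 0 .1 2   ;doubling
i 1 + .  0.5 ;halving
i 1 + .  10
i 1 + .  0.1
</CsScore>
</CsoundSynthesizer>
```

Doubling and halving give about +6.02 and -6.02 dB, while a factor of 10 in amplitude corresponds to 20 dB.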

What is 0 dB?

As described in the last section, any dB scale - for intensities, pressures or amplitudes - is just a way to describe a relationship. To have any sort of quantitative measurement you will need to know the reference value referred to as "0 dB". For real world sounds, it makes sense to set this level to the threshold of hearing. This is done, as we saw, by setting the reference value of the SIL to $10^{-12}\ W/m^2$ and that of the SPL to $2 \cdot 10^{-5}\ Pa$.

When working with digital sound within a computer, this method for defining 0dB will not make any sense. The loudness of the sound produced in the computer will ultimately depend on the amplification and the speakers, and the amplitude level set in your audio editor or in Csound will only apply an additional, and not an absolute, sound level control. Nevertheless, there is a rational reference level for the amplitudes. In a digital system, there is a strict limit for the maximum number you can store as amplitude. This maximum possible level is normally used as the reference point for 0 dB.

Each program connects this maximum possible amplitude with a number. Usually it is '1' which is a good choice, because you know that everything above 1 is clipping, and you have a handy relation for lower values. But actually this value is nothing but a setting, and in Csound you are free to set it to any value you like via the 0dbfs opcode. Usually you should use this statement in the orchestra header:

0dbfs = 1

This means: "Set the level for zero dB as full scale to 1 as reference value." Note that for historical reasons the default value in Csound is not 1 but 32768. So you must have this 0dbfs=1 statement in your header if you want to use the amplitude convention used by most modern audio programming environments.

dB Scale Versus Linear Amplitude

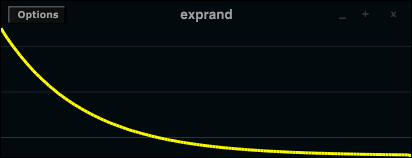

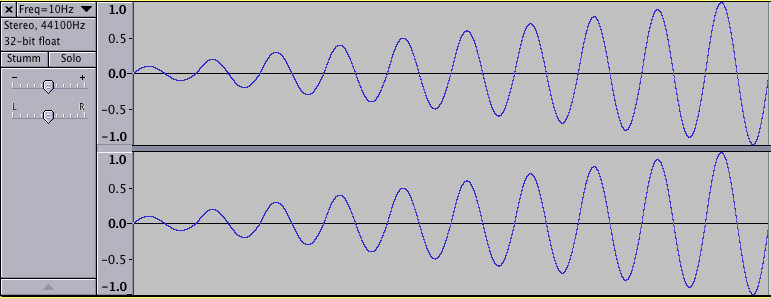

Now we will consider some practical consequences of what we have discussed so far. One major point is that for achieving perceivably smooth changes across intensity levels you must not use a simple linear transition of the amplitudes, but a linear transition of the dB equivalent. The following example shows a linear rise of the amplitudes from 0 to 1, and then a linear rise of the dB's from -80 to 0 dB, both over 10 seconds.

EXAMPLE 01C01_db_vs_linear.csd

<CsoundSynthesizer>

<CsOptions>

-odac

</CsOptions>

<CsInstruments>

;example by joachim heintz

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr 1 ;linear amplitude rise

kamp line 0, p3, 1 ;amp rise 0->1

asig oscils 1, 1000, 0 ;1000 Hz sine

aout = asig * kamp

outs aout, aout

endin

instr 2 ;linear rise of dB

kdb line -80, p3, 0 ;dB rise -80 -> 0

asig oscils 1, 1000, 0 ;1000 Hz sine

kamp = ampdb(kdb) ;transformation db -> amp

aout = asig * kamp

outs aout, aout

endin

</CsInstruments>

<CsScore>

i 1 0 10

i 2 11 10

</CsScore>

</CsoundSynthesizer>

The first note, which employs a linear rise in amplitude, is perceived as rising quickly in intensity with the rate of increase slowing quickly. The second note, which employs a linear rise in decibels, is perceived as a more constant rise in intensity.

RMS Measurement

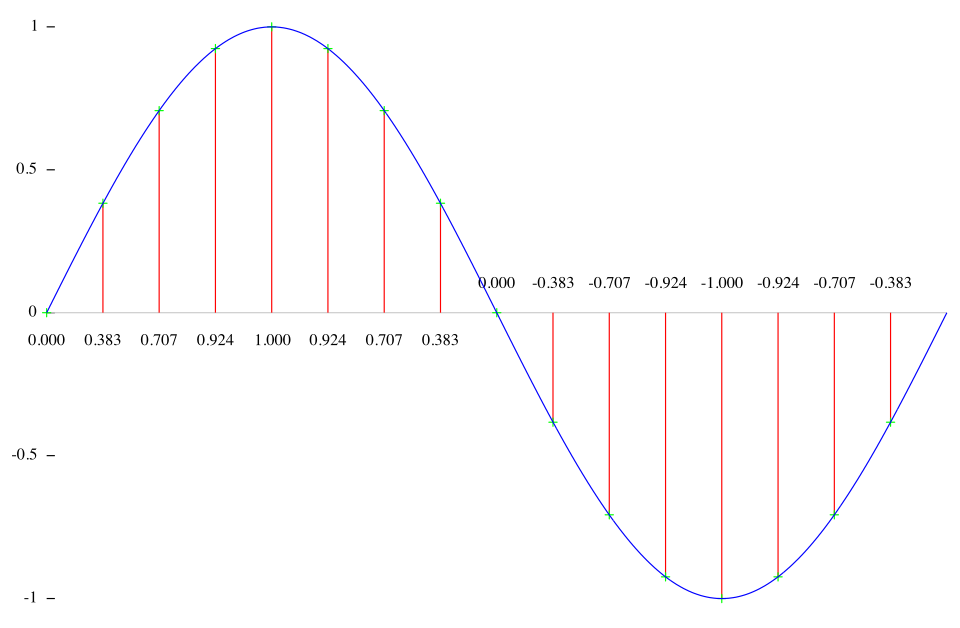

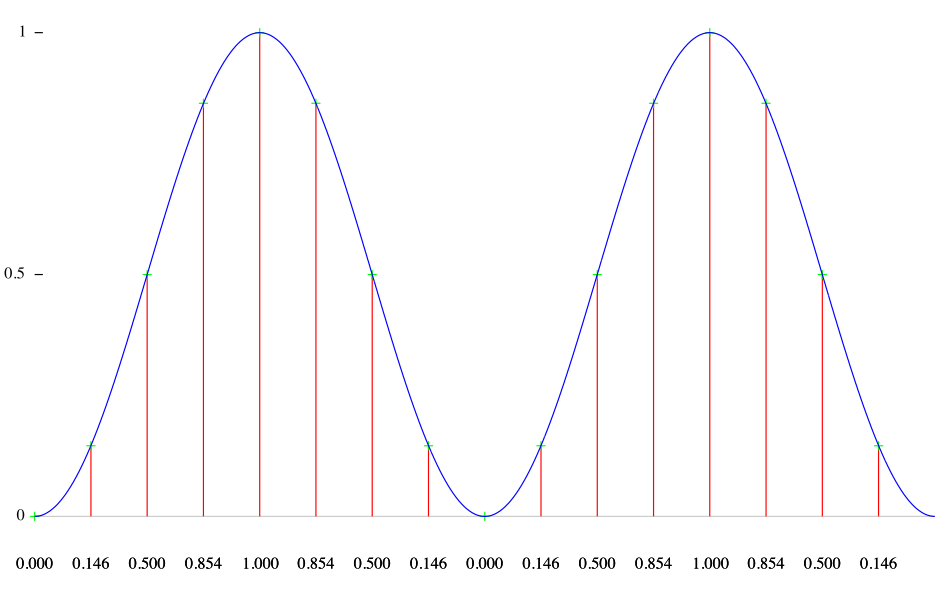

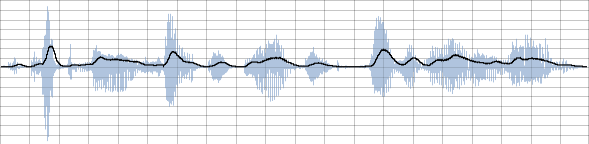

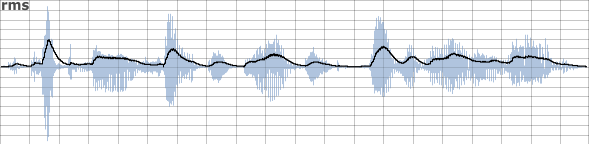

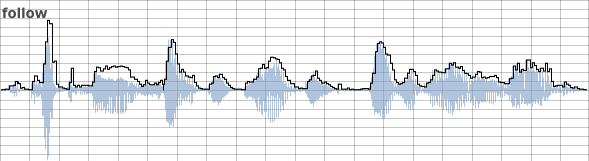

Sound intensity depends on many factors. One of the most important is the effective mean of the amplitudes in a certain time span. This is called the Root Mean Square (RMS) value. To calculate it, you (1) square the amplitudes of N samples and add them up, then (2) divide the result by N to get the mean of the squares, and finally (3) take the square root.

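Let us consider a simple example and then look at how to derive rms values within Csound. Assuming we have a sine wave which consists of 16 samples, we get these amplitudes:

0, 0.383, 0.707, 0.924, 1, 0.924, 0.707, 0.383, 0, -0.383, -0.707, -0.924, -1, -0.924, -0.707, -0.383

These are the squared amplitudes:

0, 0.146, 0.5, 0.854, 1, 0.854, 0.5, 0.146, 0, 0.146, 0.5, 0.854, 1, 0.854, 0.5, 0.146

The mean of these values is:

(0+0.146+0.5+0.854+1+0.854+0.5+0.146+0+0.146+0.5+0.854+1+0.854+0.5+0.146)/16=8/16=0.5

And the resulting RMS value is sqrt(0.5) ≈ 0.707.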
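The three steps of this calculation can also be written out by hand at i-rate. This is only a sketch for checking the arithmetic of the 16-sample sine; it does not show how the rms opcode works internally, and all variable names are chosen for this illustration.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1

instr 1
 iN = 16                               ;number of samples in one sine period
 iSum = 0
 iCount = 0
 until iCount == iN do
  iAmp = sin(2 * $M_PI * iCount / iN)  ;amplitude of this sample
  iSum += iAmp * iAmp                  ;(1) add up the squared amplitudes
  iCount += 1
 enduntil
 iMean = iSum / iN                     ;(2) mean of the squares
 iRms = sqrt(iMean)                    ;(3) square root
 prints "mean = %.3f, rms = %.3f\n", iMean, iRms
endin
</CsInstruments>
<CsScore>
i 1 0 .1
</CsScore>
</CsoundSynthesizer>
```

The printed values should agree with the mean of 0.5 and the RMS of 0.707 derived above.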

The rms opcode in Csound calculates the RMS power in a certain time span, and smooths the values in time according to the ihp parameter: the higher this value is (the default is 10 Hz), the quicker the measurement will respond to changes, and vice versa. This opcode can be used to implement a self-regulating system, in which the rms opcode prevents the system from exploding: each time the rms value exceeds a certain value, the amount of feedback is reduced. The following example demonstrates this.1

EXAMPLE 01C02_rms_feedback_system.csd

<CsoundSynthesizer>

<CsOptions>

-odac

</CsOptions>

<CsInstruments>

;example by Martin Neukom, adapted by Joachim Heintz

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

giSine ftgen 0, 0, 2^10, 10, 1 ;table with a sine wave

instr 1

a3 init 0

kamp linseg 0, 1.5, 0.2, 1.5, 0 ;envelope for initial input

asnd poscil kamp, 440, giSine ;initial input

if p4 == 1 then ;choose between two sines ...

adel1 poscil 0.0523, 0.023, giSine

adel2 poscil 0.073, 0.023, giSine,.5

else ;or a random movement for the delay lines

adel1 randi 0.05, 0.1, 2

adel2 randi 0.08, 0.2, 2

endif

a0 delayr 1 ;delay line of 1 second

a1 deltapi adel1 + 0.1 ;first reading

a2 deltapi adel2 + 0.1 ;second reading

krms rms a3 ;rms measurement

delayw asnd + exp(-krms) * a3 ;feedback depending on rms

a3 reson -(a1+a2), 3000, 7000, 2 ;calculate a3

aout linen a1/3, 1, p3, 1 ;apply fade in and fade out

outs aout, aout

endin

</CsInstruments>

<CsScore>

i 1 0 60 1 ;two sine movements of delay with feedback

i 1 61 . 2 ;two random movements of delay with feedback

</CsScore>

</CsoundSynthesizer>

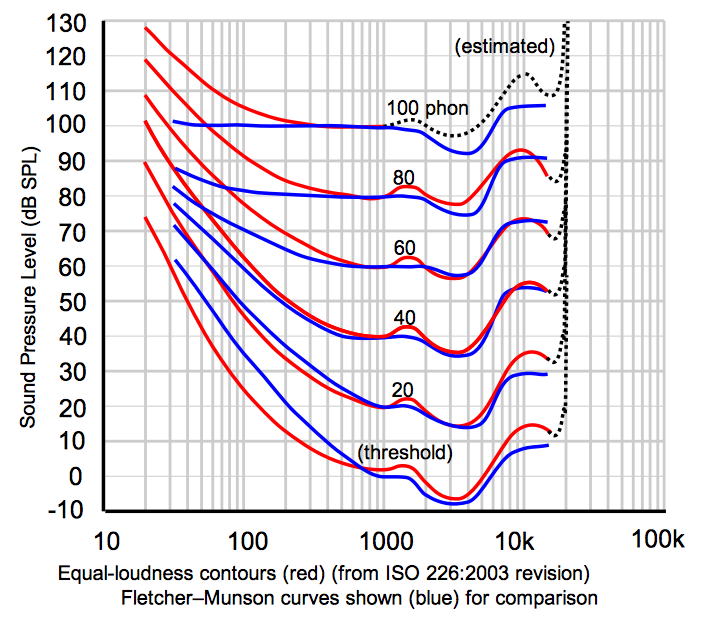

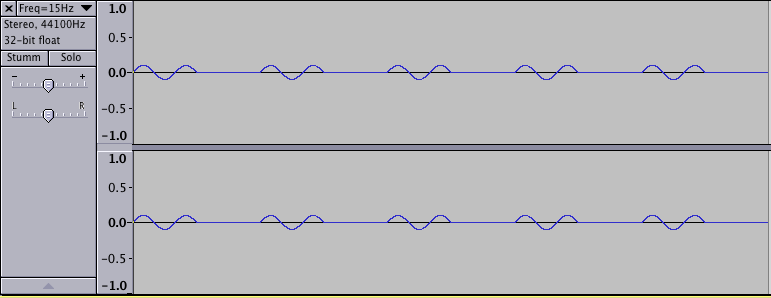

Fletcher-Munson Curves

The range of human hearing is roughly from 20 to 20000 Hz, but within this range, the hearing is not equally sensitive to intensity. The most sensitive region is around 3000 Hz. If a sound is operating in the upper or lower limits of this range, it will need greater intensity in order to be perceived as equally loud.

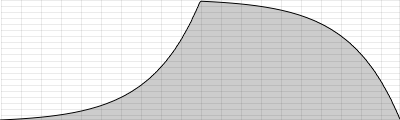

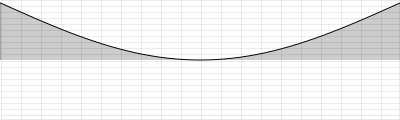

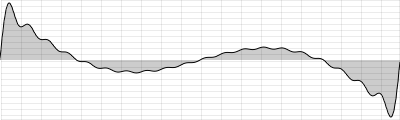

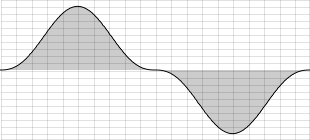

These curves of equal loudness are usually called "Fletcher-Munson Curves" after the 1933 paper by H. Fletcher and W. A. Munson. They look like this:

Try the following test. During the first 5 seconds you will hear a tone of 3000 Hz. Adjust the level of your amplifier to the lowest possible level at which you can still hear the tone. Next you will hear a tone whose frequency starts at 20 Hertz and ends at 20000 Hertz, over 20 seconds. Try to move the fader or knob of your amplifier so that the tone always remains just audible, but as soft as possible. The movement of your fader should be roughly similar to the lowest Fletcher-Munson curve: starting relatively high, going down and down until 3000 Hertz, and then up again. Of course, the effectiveness of this test will also depend upon the quality of your speaker hardware. If your speakers do not provide adequate low frequency response, you will not hear anything in the bass region.

EXAMPLE 01C03_FletcherMunson.csd

<CsoundSynthesizer>

<CsOptions>

-odac

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

giSine ftgen 0, 0, 2^10, 10, 1 ;table with a sine wave

instr 1

kfreq expseg p4, p3, p5

printk 1, kfreq ;prints the frequencies once a second

asin poscil .2, kfreq, giSine

aout linen asin, .01, p3, .01

outs aout, aout

endin

</CsInstruments>

<CsScore>

i 1 0 5 3000 3000

i 1 6 20 20 20000

</CsScore>

</CsoundSynthesizer>

It is very important to bear in mind when designing instruments that the perceived loudness of a sound will depend upon its frequency content. You must remain aware that projecting a 30 Hz sine at a certain amplitude will be perceived differently to a 3000 Hz sine at the same amplitude; the latter will sound much louder.

- cf. Martin Neukom, Signale Systeme Klangsynthese, Zürich 2003, p. 383

RANDOM

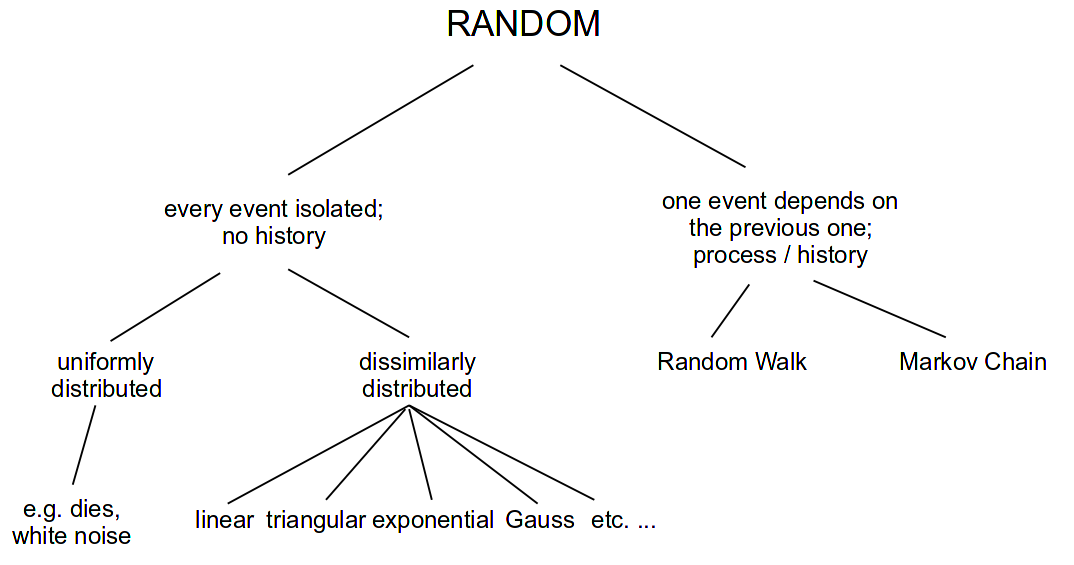

This chapter is in three parts. Part I provides a general introduction to the concepts behind random numbers and how to work with them in Csound. Part II focusses on a more mathematical approach. Part III introduces a number of opcodes for generating random numbers, functions and distributions and demonstrates their use in musical examples.

I. GENERAL INTRODUCTION

Random is Different

The term random derives from the idea of a horse that is running so fast it becomes 'out of control' or 'beyond predictability'.1 Yet there are different ways in which to run fast and to be out of control; therefore there are different types of randomness.

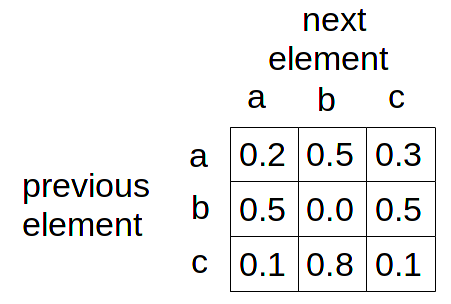

We can divide types of randomness into two classes. The first contains random events that are independent of previous events. The most common example for this is throwing a die. Even if you have just thrown three '1's in a row, when thrown again, a '1' has the same probability as before (and as any other number). The second class of random number involves random events which depend in some way upon previous numbers or states. Examples here are Markov chains and random walks.

The use of randomness in electronic music is widespread. In this chapter, we shall try to explain how the different random horses are moving, and how you can create and modify them on your own. Moreover, there are many pre-built random opcodes in Csound which can be used out of the box (see the overview in the Csound Manual). The final section of this chapter introduces some musically interesting applications of them.

Random Without History

A computer is typically only capable of computation. Computations are deterministic processes: one input will always generate the same output, but a random event is not predictable. To generate something which looks like a random event, the computer uses a pseudo-random generator.

The pseudo-random generator takes one number as input, and generates another number as output. This output is then the input for the next generation. Over a large quantity of numbers, the outputs look as if they are randomly distributed, although everything depends on the first input: the seed. For one given seed, all subsequent values can be predicted.
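The feedback principle described above can be sketched with a deliberately tiny generator. This is an illustration only: it uses a linear congruential formula with the arbitrarily chosen constants 5, 3 and 16, which is not the algorithm used by Csound's own random opcodes, and the instrument name lcg is hypothetical.

```csound
<CsoundSynthesizer>
<CsOptions>
-n
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1

instr lcg
 iVal = p4                  ;the seed is the first input
 prints "seed %d:", iVal
 iCount = 0
 until iCount == 16 do
  iVal = (5*iVal + 3) % 16  ;each output becomes the next input
  prints " %d", iVal
  iCount += 1
 enduntil
 prints "\n"
endin
</CsInstruments>
<CsScore>
i "lcg" 0 .1 1
i "lcg" 1 .1 1 ;same seed: same series
i "lcg" 2 .1 7 ;other seed: other starting point in the series
</CsScore>
</CsoundSynthesizer>
```

With these constants the generator can only produce 16 different values, so after 16 steps it arrives back at the seed and the series repeats. Real generators behave in the same way, only with a far longer series.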

Uniform Distribution

The output of a classical pseudo-random generator is uniformly distributed: each value in a given range has the same likelihood of occurrence. The first example shows the influence of a fixed seed (using the same chain of numbers and beginning from the same location in the chain each time) in contrast to a seed being taken from the system clock (the usual way of imitating unpredictability). The first three groups of four notes will always be the same because of the use of the same seed, whereas the last three groups should differ in pitch each time.

EXAMPLE 01D01_different_seed.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac -m0

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr generate

;get seed: 0 = seeding from system clock

; otherwise = fixed seed

seed p4

;generate four notes to be played from subinstrument

iNoteCount = 0

until iNoteCount == 4 do

iFreq random 400, 800

event_i "i", "play", iNoteCount, 2, iFreq

iNoteCount += 1 ;increase note count

enduntil

endin

instr play

iFreq = p4

print iFreq

aImp mpulse .5, p3

aMode mode aImp, iFreq, 1000

aEnv linen aMode, 0.01, p3, p3-0.01

outs aEnv, aEnv

endin

</CsInstruments>

<CsScore>

;repeat three times with fixed seed

r 3

i "generate" 0 2 1

;repeat three times with seed from the system clock

r 3

i "generate" 0 1 0

</CsScore>

</CsoundSynthesizer>

;example by joachim heintz

Note that a pseudo-random generator will repeat its series of numbers after as many steps as are given by the size of the generator. If a 16-bit number is generated, the series will be repeated after 65536 steps. If you listen carefully to the following example, you will hear a repetition in the structure of the white noise (which is the result of uniformly distributed amplitudes) after about 1.5 seconds in the first note.2 In the second note, there is no perceivable repetition as the random generator now works with a 31-bit number.

EXAMPLE 01D02_white_noises.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

instr white_noise

iBit = p4 ;0 = 16 bit, 1 = 31 bit

;input of rand: amplitude, fixed seed (0.5), bit size

aNoise rand .1, 0.5, iBit

outs aNoise, aNoise

endin

</CsInstruments>

<CsScore>

i "white_noise" 0 10 0

i "white_noise" 11 10 1

</CsScore>

</CsoundSynthesizer>

;example by joachim heintz
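The time until the noise repeats can be estimated by dividing the length of the series by the sample rate. This is a rough calculation which assumes that one random number is consumed per sample and that the length of the series is simply 2 raised to the number of bits:

$$\frac{2^{16}}{44100\ \text{Hz}} \approx 1.49\ \text{s} \qquad \frac{2^{31}}{44100\ \text{Hz}} \approx 48696\ \text{s} \approx 13.5\ \text{hours}$$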

Two more general notes about this:

- The way to set the seed differs from opcode to opcode. There are several opcodes, such as rand featured above, which offer the choice of setting a seed as an input parameter. For others, such as the frequently used random family, the seed can only be set globally via the seed statement. This is usually done in the header, so a typical statement would be:

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

seed 0 ;seeding from current time

...

- Random number generation in Csound can be done at any rate. The type of the output variable tells you whether you are generating random values at i-, k- or a-rate. Many random opcodes can work at all these rates, for instance random:

1) ires random imin, imax

2) kres random kmin, kmax

3) ares random kmin, kmax

In the first case, a random value is generated only once, at initialisation, when an instrument is called. The generated value is then stored in the variable ires. In the second case, a random value is generated at each k-cycle, and stored in kres. In the third case, as many random values are generated in each k-cycle as the audio vector has samples (ksmps), and these are stored in the variable ares. Have a look at example 03A16_Random_at_ika.csd to see this at work. Chapter 03A explains the background of the different rates in depth, and how to work with them.
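A compact sketch of the three cases in one instrument follows. It is not the example referred to above, only a short illustration with hypothetical variable names; the print interval and the noise level are arbitrary.

```csound
<CsoundSynthesizer>
<CsOptions>
-odac
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1
seed 0

instr rates
 iRes random 0, 1       ;one value for the whole note
 kRes random 0, 1       ;one new value in each k-cycle
 aRes random -.1, .1    ;ksmps new values in each k-cycle
 prints "iRes = %f\n", iRes
 printk .5, kRes        ;show kRes twice a second
 outs aRes, aRes        ;the a-rate values are heard as soft noise
endin
</CsInstruments>
<CsScore>
i "rates" 0 2
</CsScore>
</CsoundSynthesizer>
```

The i-rate value is printed once, the k-rate printout shows a different value each time, and the a-rate values are what you hear.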

Other Distributions

The uniform distribution is the one each computer can output via its pseudo-random generator. But there are many situations in which you will not want uniformly distributed random values, but some other shape. Some of these shapes are quite common, but you can actually build your own shapes quite easily in Csound. The next examples demonstrate how to do this. They are based on the chapter in Dodge/Jerse3 which also served as a model for many random number generator opcodes in Csound.4

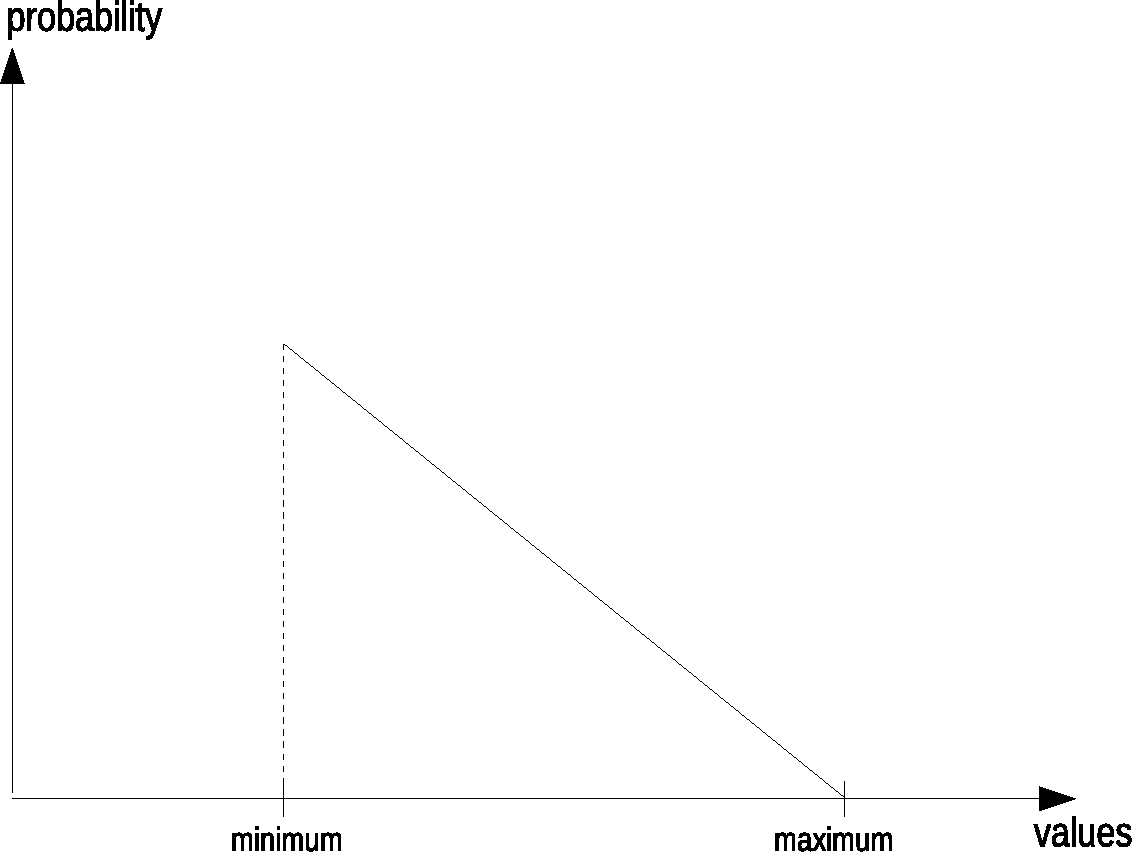

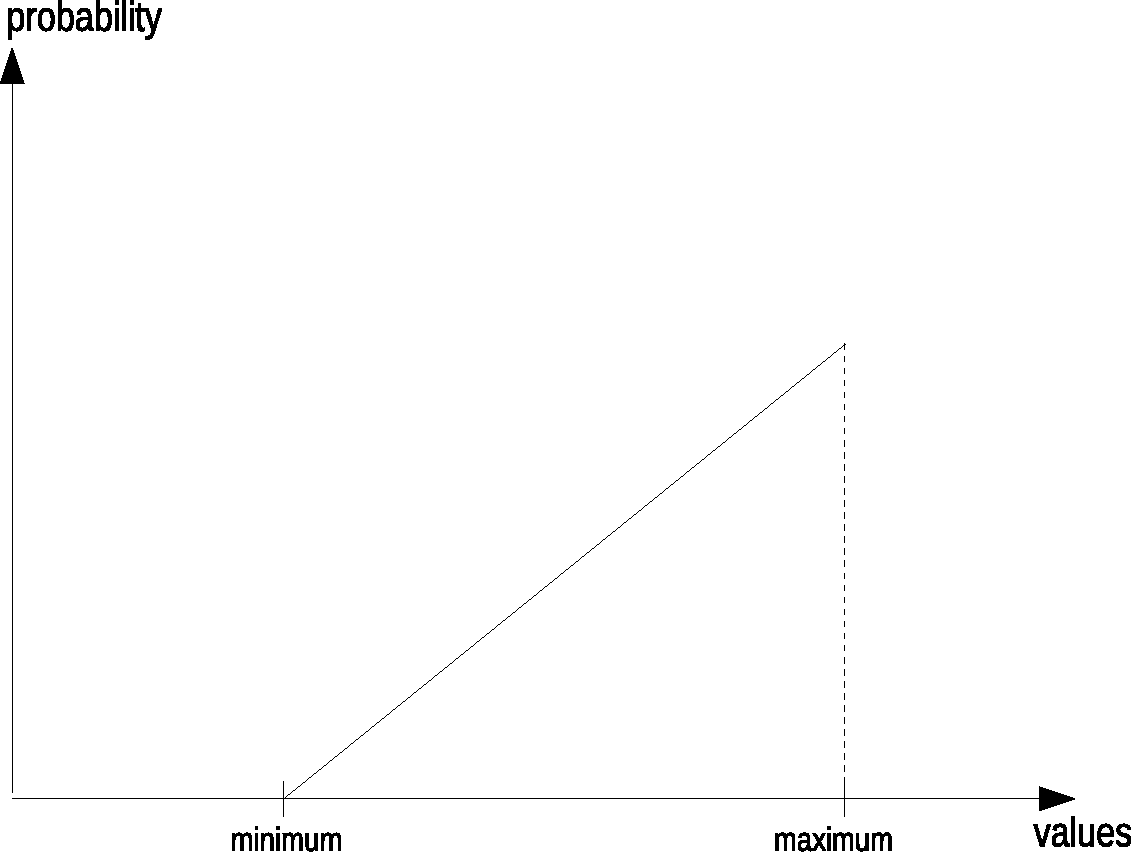

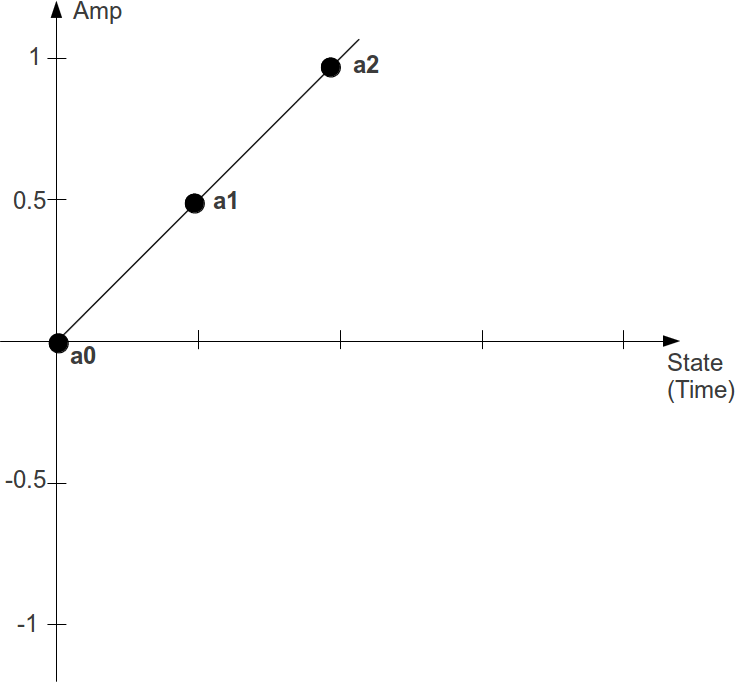

Linear

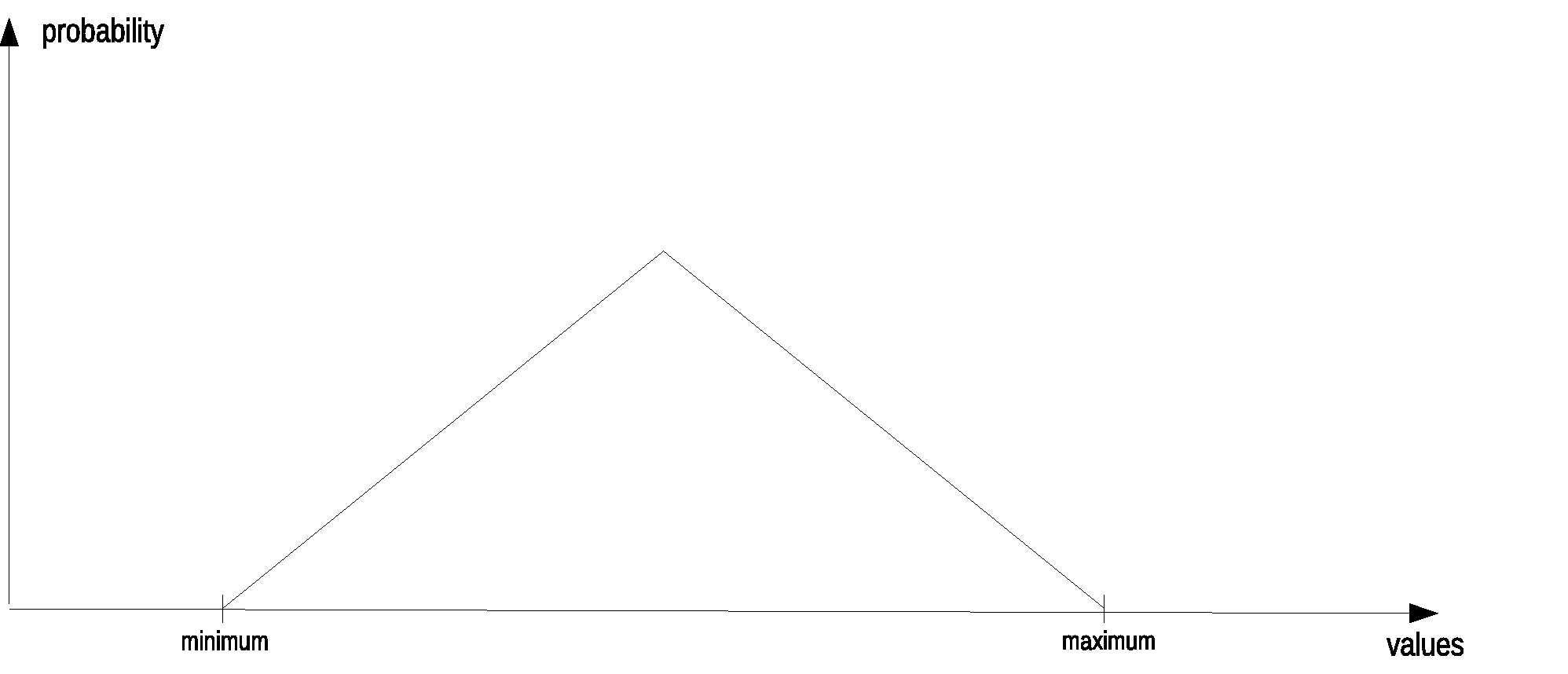

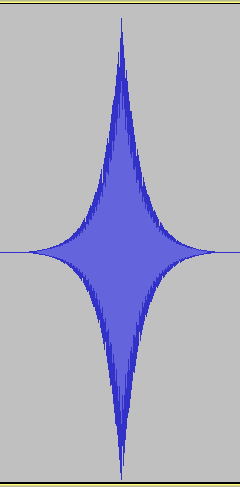

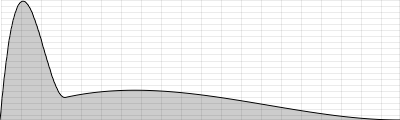

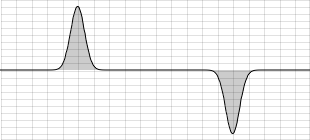

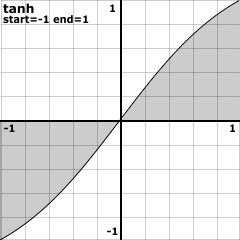

A linear distribution means that either lower or higher values in a given range are more likely:

To get this behaviour, two uniform random numbers are generated, and the lower is taken for the first shape. If the second shape with the precedence of higher values is needed, the higher one of the two generated numbers is taken. The next example implements these random generators as User Defined Opcodes. First we hear a uniform distribution, then a linear distribution with precedence of lower pitches (but longer durations), and finally a linear distribution with precedence of higher pitches (but shorter durations).

EXAMPLE 01D03_linrand.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac -m0

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

seed 0

;****DEFINE OPCODES FOR LINEAR DISTRIBUTION****

opcode linrnd_low, i, ii

;linear random with precedence of lower values

iMin, iMax xin

;generate two random values with the random opcode

iOne random iMin, iMax

iTwo random iMin, iMax

;compare and get the lower one

iRnd = iOne < iTwo ? iOne : iTwo

xout iRnd

endop

opcode linrnd_high, i, ii

;linear random with precedence of higher values

iMin, iMax xin

;generate two random values with the random opcode

iOne random iMin, iMax

iTwo random iMin, iMax

;compare and get the higher one

iRnd = iOne > iTwo ? iOne : iTwo

xout iRnd

endop

;****INSTRUMENTS FOR THE DIFFERENT DISTRIBUTIONS****

instr notes_uniform

prints "... instr notes_uniform playing:\n"

prints "EQUAL LIKELINESS OF ALL PITCHES AND DURATIONS\n"

;how many notes to be played

iHowMany = p4

;trigger as many instances of instr play as needed

iThisNote = 0

iStart = 0

until iThisNote == iHowMany do

iMidiPch random 36, 84 ;midi note

iDur random .5, 1 ;duration

event_i "i", "play", iStart, iDur, int(iMidiPch)

iStart += iDur ;increase start

iThisNote += 1 ;increase counter

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;trigger next instrument two seconds after the last note

event_i "i", "notes_linrnd_low", p3, 1, iHowMany

endin

instr notes_linrnd_low

prints "... instr notes_linrnd_low playing:\n"

prints "LOWER NOTES AND LONGER DURATIONS PREFERRED\n"

iHowMany = p4

iThisNote = 0

iStart = 0

until iThisNote == iHowMany do

iMidiPch linrnd_low 36, 84 ;lower pitches preferred

iDur linrnd_high .5, 1 ;longer durations preferred

event_i "i", "play", iStart, iDur, int(iMidiPch)

iStart += iDur

iThisNote += 1

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;trigger next instrument two seconds after the last note

event_i "i", "notes_linrnd_high", p3, 1, iHowMany

endin

instr notes_linrnd_high

prints "... instr notes_linrnd_high playing:\n"

prints "HIGHER NOTES AND SHORTER DURATIONS PREFERRED\n"

iHowMany = p4

iThisNote = 0

iStart = 0

until iThisNote == iHowMany do

iMidiPch linrnd_high 36, 84 ;higher pitches preferred

iDur linrnd_low .3, 1.2 ;shorter durations preferred

event_i "i", "play", iStart, iDur, int(iMidiPch)

iStart += iDur

iThisNote += 1

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;call instr to exit csound

event_i "i", "exit", p3+1, 1

endin

;****INSTRUMENTS TO PLAY THE SOUNDS AND TO EXIT CSOUND****

instr play

;increase duration in random range

iDur random p3, p3*1.5

p3 = iDur

;get midi note and convert to frequency

iMidiNote = p4

iFreq cpsmidinn iMidiNote

;generate note with karplus-strong algorithm

aPluck pluck .2, iFreq, iFreq, 0, 1

aPluck linen aPluck, 0, p3, p3

;filter

aFilter mode aPluck, iFreq, .1

;mix aPluck and aFilter according to MidiNote

;(high notes will be filtered more)

aMix ntrpol aPluck, aFilter, iMidiNote, 36, 84

;panning also according to MidiNote

;(low = left, high = right)

iPan = (iMidiNote-36) / 48

aL, aR pan2 aMix, iPan

outs aL, aR

endin

instr exit

exitnow

endin

</CsInstruments>

<CsScore>

i "notes_uniform" 0 1 23 ;set number of notes per instr here

;instruments linrnd_low and linrnd_high are triggered automatically

e 99999 ;allow a long performance (Csound is exited automatically by instr exit)

</CsScore>

</CsoundSynthesizer>

;example by joachim heintz
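Why taking the lower or the higher of two uniform random numbers gives a linear shape can be shown in a few lines. This derivation is a sketch which assumes two independent uniform values $u_1$ and $u_2$ in the range 0 to 1; the symbols are introduced here and are not taken from the examples.

$$P(\min(u_1,u_2) > x) = (1-x)^2 \;\Rightarrow\; f_{\min}(x) = \frac{d}{dx}\left[1-(1-x)^2\right] = 2(1-x)$$

$$P(\max(u_1,u_2) \le x) = x^2 \;\Rightarrow\; f_{\max}(x) = \frac{d}{dx}\,x^2 = 2x$$

Both densities are straight lines: the first falls from 2 to 0 across the range, so low values are preferred, and the second rises from 0 to 2, so high values are preferred.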

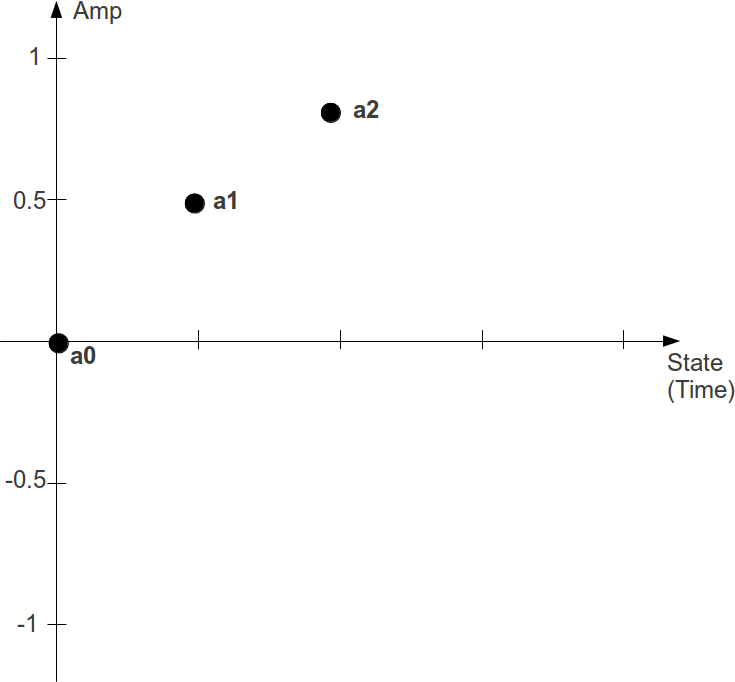

Triangular

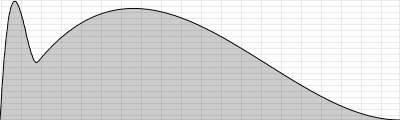

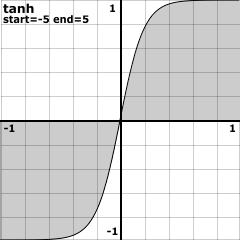

In a triangular distribution the values in the middle of the given range are more likely than those at the borders. The probability transition between the middle and the extrema is linear:

The algorithm for getting this distribution is very simple as well. Generate two uniform random numbers and take the mean of them. The next example shows the difference between uniform and triangular distribution in the same environment as the previous example.

EXAMPLE 01D04_trirand.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac -m0

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

seed 0

;****UDO FOR TRIANGULAR DISTRIBUTION****

opcode trirnd, i, ii

iMin, iMax xin

;generate two random values with the random opcode

iOne random iMin, iMax

iTwo random iMin, iMax

;get the mean and output

iRnd = (iOne+iTwo) / 2

xout iRnd

endop

;****INSTRUMENTS FOR UNIFORM AND TRIANGULAR DISTRIBUTION****

instr notes_uniform

prints "... instr notes_uniform playing:\n"

prints "EQUAL LIKELIHOOD OF ALL PITCHES AND DURATIONS\n"

;how many notes to be played

iHowMany = p4

;trigger as many instances of instr play as needed

iThisNote = 0

iStart = 0

until iThisNote == iHowMany do

iMidiPch random 36, 84 ;midi note

iDur random .25, 1.75 ;duration

event_i "i", "play", iStart, iDur, int(iMidiPch)

iStart += iDur ;increase start

iThisNote += 1 ;increase counter

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;trigger next instrument two seconds after the last note

event_i "i", "notes_trirnd", p3, 1, iHowMany

endin

instr notes_trirnd

prints "... instr notes_trirnd playing:\n"

prints "MEDIUM NOTES AND DURATIONS PREFERRED\n"

iHowMany = p4

iThisNote = 0

iStart = 0

until iThisNote == iHowMany do

iMidiPch trirnd 36, 84 ;medium pitches preferred

iDur trirnd .25, 1.75 ;medium durations preferred

event_i "i", "play", iStart, iDur, int(iMidiPch)

iStart += iDur

iThisNote += 1

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;call instr to exit csound

event_i "i", "exit", p3+1, 1

endin

;****INSTRUMENTS TO PLAY THE SOUNDS AND EXIT CSOUND****

instr play

;increase duration in random range

iDur random p3, p3*1.5

p3 = iDur

;get midi note and convert to frequency

iMidiNote = p4

iFreq cpsmidinn iMidiNote

;generate note with karplus-strong algorithm

aPluck pluck .2, iFreq, iFreq, 0, 1

aPluck linen aPluck, 0, p3, p3

;filter

aFilter mode aPluck, iFreq, .1

;mix aPluck and aFilter according to MidiNote

;(high notes will be filtered more)

aMix ntrpol aPluck, aFilter, iMidiNote, 36, 84

;panning also according to MidiNote

;(low = left, high = right)

iPan = (iMidiNote-36) / 48

aL, aR pan2 aMix, iPan

outs aL, aR

endin

instr exit

exitnow

endin

</CsInstruments>

<CsScore>

i "notes_uniform" 0 1 23 ;set number of notes per instr here

;instr notes_trirnd will be triggered automatically

e 99999 ;allow a long performance (Csound exits automatically)

</CsScore>

</CsoundSynthesizer>

;example by joachim heintz

More Linear and Triangular

Having written this with some very simple UDOs, it is easy to emphasise the probability peaks of the distributions by generating more than two random numbers. If you generate three numbers and choose the smallest of them, even more of the results will lie near the minimum than in the linear distribution built from two numbers. If you generate three random numbers and take their mean, even more of the results will lie near the middle than in the triangular distribution built from two numbers.
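To get a feeling for how strongly an additional unit shifts the weight, the following sketch counts how many of 10000 values fall into the lower half of the range when the smallest of two, or of three, uniform random numbers is taken. The UDO name min_of and the instrument name count_low are made up for this illustration, and the number of trials is an arbitrary assumption. In theory the proportion is 1 - 0.5^n for n units, so about 0.75 for two units and about 0.875 for three; the printed numbers should come close to this but will differ from run to run.

```csound
<CsoundSynthesizer>
<CsOptions>
-n -m0
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1
seed 0

;hypothetical helper: smallest of iUnits uniform random numbers
opcode min_of, i, iii
 iMin, iMax, iUnits xin
 iCount = 0
 iRnd = iMax
 until iCount == iUnits do
  iUniRnd random iMin, iMax
  iRnd = iUniRnd < iRnd ? iUniRnd : iRnd
  iCount += 1
 enduntil
 xout iRnd
endop

;hypothetical test instrument: no sound, only counting and printing
instr count_low
 iUnits = p4
 iTrials = 10000 ;arbitrary number of trials
 iBelow = 0
 iCount = 0
 until iCount == iTrials do
  iVal min_of 0, 1, iUnits
  ;count the values in the lower half of the range
  if iVal < 0.5 then
   iBelow += 1
  endif
  iCount += 1
 enduntil
 iPart = iBelow / iTrials
 prints "%d units: proportion below 0.5 = %.3f\n", iUnits, iPart
endin
</CsInstruments>
<CsScore>
i "count_low" 0 .1 2
i "count_low" 0 .1 3
</CsScore>
</CsoundSynthesizer>
```

The same test can be run with higher unit counts in p4 to see how quickly the values gather near the minimum.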

If we want to write UDOs with a flexible number of sub-generated numbers, we have to write the code in a slightly different way. Instead of having one line of code for each random generator, we will use a loop, which calls the generator as many times as we wish to have units. A variable will store the results of the accumulation. Re-writing the above code for the UDO trirnd would lead to this formulation:

opcode trirnd, i, ii

iMin, iMax xin

;set a counter and a maximum count

iCount = 0

iMaxCount = 2

;set the accumulator to zero as initial value

iAccum = 0

;perform loop and accumulate

until iCount == iMaxCount do

iUniRnd random iMin, iMax

iAccum += iUniRnd

iCount += 1

enduntil

;get the mean and output

iRnd = iAccum / iMaxCount

xout iRnd

endop

To make this completely flexible, you only have to pass iMaxCount as an input argument. The code for the linear distribution UDOs is quite similar. The next example shows these steps:

- Uniform distribution.

- Linear distribution with the precedence of lower pitches and longer durations, generated with two units.

- The same but with four units.

- Linear distribution with the precedence of higher pitches and shorter durations, generated with two units.

- The same but with four units.

- Triangular distribution with the precedence of both medium pitches and durations, generated with two units.

- The same but with six units.

Rather than using different instruments for the different distributions, the next example combines all possibilities in one single instrument. Inside the loop which generates as many notes as desired by the iHowMany argument, an if-branch calculates the pitch and duration of one note depending on the distribution type and the number of sub-units used. The whole sequence (which type first, which next, etc.) is stored in the global array giSequence. Each instance of instrument "notes" increases the pointer giSeqIndx, so that the next run reads the next element of the array. If the pointer has reached the end of the array, the instrument which exits Csound is called instead of a new instance of "notes".

EXAMPLE 01D05_more_lin_tri_units.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac -m0

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

seed 0

;****SEQUENCE OF UNITS AS ARRAY****

giSequence[] array 0, 1.2, 1.4, 2.2, 2.4, 3.2, 3.6

giSeqIndx = 0 ;startindex

;****UDO DEFINITIONS****

opcode linrnd_low, i, iii

;linear random with precedence of lower values

iMin, iMax, iMaxCount xin

;set counter and initial (absurd) result

iCount = 0

iRnd = iMax

;loop and reset iRnd

until iCount == iMaxCount do

iUniRnd random iMin, iMax

iRnd = iUniRnd < iRnd ? iUniRnd : iRnd

iCount += 1

enduntil

xout iRnd

endop

opcode linrnd_high, i, iii

;linear random with precedence of higher values

iMin, iMax, iMaxCount xin

;set counter and initial (absurd) result

iCount = 0

iRnd = iMin

;loop and reset iRnd

until iCount == iMaxCount do

iUniRnd random iMin, iMax

iRnd = iUniRnd > iRnd ? iUniRnd : iRnd

iCount += 1

enduntil

xout iRnd

endop

opcode trirnd, i, iii

iMin, iMax, iMaxCount xin

;set a counter and accumulator

iCount = 0

iAccum = 0

;perform loop and accumulate

until iCount == iMaxCount do

iUniRnd random iMin, iMax

iAccum += iUniRnd

iCount += 1

enduntil

;get the mean and output

iRnd = iAccum / iMaxCount

xout iRnd

endop

;****ONE INSTRUMENT TO PERFORM ALL DISTRIBUTIONS****

;0 = uniform, 1 = linrnd_low, 2 = linrnd_high, 3 = trirnd

;the fractional part denotes the number of units, e.g.

;3.4 = triangular distribution with four sub-units

instr notes

;how many notes to be played

iHowMany = p4

;by which distribution with how many units

iWhich = giSequence[giSeqIndx]

iDistrib = int(iWhich)

iUnits = round(frac(iWhich) * 10)

;set min and max duration

iMinDur = .1

iMaxDur = 2

;set min and max pitch

iMinPch = 36

iMaxPch = 84

;trigger as many instances of instr play as needed

iThisNote = 0

iStart = 0

iPrint = 1

;for each note to be played

until iThisNote == iHowMany do

;calculate iMidiPch and iDur depending on type

if iDistrib == 0 then

printf_i "%s", iPrint, "... uniform distribution:\n"

printf_i "%s", iPrint, "EQUAL LIKELIHOOD OF ALL PITCHES AND DURATIONS\n"

iMidiPch random iMinPch, iMaxPch ;midi note

iDur random iMinDur, iMaxDur ;duration

elseif iDistrib == 1 then

printf_i "... linear low distribution with %d units:\n", iPrint, iUnits

printf_i "%s", iPrint, "LOWER NOTES AND LONGER DURATIONS PREFERRED\n"

iMidiPch linrnd_low iMinPch, iMaxPch, iUnits

iDur linrnd_high iMinDur, iMaxDur, iUnits

elseif iDistrib == 2 then

printf_i "... linear high distribution with %d units:\n", iPrint, iUnits

printf_i "%s", iPrint, "HIGHER NOTES AND SHORTER DURATIONS PREFERRED\n"

iMidiPch linrnd_high iMinPch, iMaxPch, iUnits

iDur linrnd_low iMinDur, iMaxDur, iUnits

else

printf_i "... triangular distribution with %d units:\n", iPrint, iUnits

printf_i "%s", iPrint, "MEDIUM NOTES AND DURATIONS PREFERRED\n"

iMidiPch trirnd iMinPch, iMaxPch, iUnits

iDur trirnd iMinDur, iMaxDur, iUnits

endif

;call subinstrument to play note

event_i "i", "play", iStart, iDur, int(iMidiPch)

;increase start time and counter

iStart += iDur

iThisNote += 1

;avoid continuous printing

iPrint = 0

enduntil

;reset the duration of this instr to make all events happen

p3 = iStart + 2

;increase index for sequence

giSeqIndx += 1

;call instr again if the sequence has not ended

if giSeqIndx < lenarray(giSequence) then

event_i "i", "notes", p3, 1, iHowMany

;or exit

else

event_i "i", "exit", p3, 1

endif

endin

;****INSTRUMENTS TO PLAY THE SOUNDS AND EXIT CSOUND****

instr play

;increase duration in random range

iDur random p3, p3*1.5

p3 = iDur

;get midi note and convert to frequency

iMidiNote = p4

iFreq cpsmidinn iMidiNote

;generate note with karplus-strong algorithm

aPluck pluck .2, iFreq, iFreq, 0, 1

aPluck linen aPluck, 0, p3, p3

;filter

aFilter mode aPluck, iFreq, .1

;mix aPluck and aFilter according to MidiNote

;(high notes will be filtered more)

aMix ntrpol aPluck, aFilter, iMidiNote, 36, 84

;panning also according to MidiNote

;(low = left, high = right)

iPan = (iMidiNote-36) / 48

aL, aR pan2 aMix, iPan

outs aL, aR

endin

instr exit

exitnow

endin

</CsInstruments>

<CsScore>

i "notes" 0 1 23 ;set number of notes per instr here

e 99999 ;allow a long performance (Csound exits automatically)

</CsScore>

</CsoundSynthesizer>

;example by joachim heintz

With this method we can build probability distributions which are very similar to exponential or Gaussian distributions.5 Their shape can easily be controlled by the number of sub-units used.
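The influence of the number of sub-units can also be made visible without any sound. The following sketch sorts 10000 values of the trirnd UDO from the example above into eight bins of equal width and prints the counts, once for two and once for six units. The instrument name histogram, the number of bins and the number of trials are assumptions made for this illustration only.

```csound
<CsoundSynthesizer>
<CsOptions>
-n -m0
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1
seed 0

;mean of iMaxCount uniform random numbers (as in the example above)
opcode trirnd, i, iii
 iMin, iMax, iMaxCount xin
 iCount = 0
 iAccum = 0
 until iCount == iMaxCount do
  iUniRnd random iMin, iMax
  iAccum += iUniRnd
  iCount += 1
 enduntil
 iRnd = iAccum / iMaxCount
 xout iRnd
endop

;hypothetical test instrument: count the values per bin and print
instr histogram
 iUnits = p4
 iTrials = 10000 ;arbitrary number of trials
 iNumBins = 8 ;arbitrary number of bins
 iBins[] init iNumBins
 iCount = 0
 until iCount == iTrials do
  iVal trirnd 0, 1, iUnits
  ;find the bin for this value (with a guard for the upper border)
  iBin = int(iVal * iNumBins)
  iBin = iBin >= iNumBins ? iNumBins - 1 : iBin
  iBins[iBin] = iBins[iBin] + 1
  iCount += 1
 enduntil
 ;print the counts from the lowest to the highest bin
 printf_i "%d units:", 1, iUnits
 iBin = 0
 until iBin == iNumBins do
  printf_i " %d", 1, iBins[iBin]
  iBin += 1
 enduntil
 printf_i "%s", 1, "\n"
endin
</CsInstruments>
<CsScore>
i "histogram" 0 .1 2
i "histogram" 0 .1 6
</CsScore>
</CsoundSynthesizer>
```

With two units the counts should rise and fall roughly linearly around the middle bins. With six units they should form a narrower, bell-like shape, because the mean of many uniform random numbers approaches a Gaussian distribution.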

Scalings

Randomness is a complex and sensitive matter. There are so many ways to let the horse go, run, or dance; the conditions you set for this 'way of moving' are much more important than the fact that one single move is not predictable. What are the conditions of this randomness?

- Which Way. This is what has already been described: random with or without history, which probability distribution, etc.

- Which Range. This is a decision which comes from the composer/programmer. In the example above I have chosen pitches from MIDI note 36 to 84 (C2 to C6), and durations between 0.1 and 2 seconds. Imagine how it would have sounded with pitches from 60 to 67, and durations from 0.9 to 1.1 seconds, or from 0.1 to 0.2 seconds. There is no range which is 'correct'; everything depends on the musical idea.

- Which Development. Usually the boundaries will change in the course of a piece. The pitch range may move from low to high, or from narrow to wide; the durations may become shorter, etc.

- Which Scalings. Let us think about this in more detail.

In the example above we used two implicit scalings. The pitches have been scaled to the keys of a piano or keyboard. Why? We are obviously not playing a piano here. What other possibilities might there have been instead? One would be: no scaling at all. This is the easiest way to go; whether it is really the best, or simple laziness, can only be decided by the composer or the listener.

Instead of using the equal-tempered chromatic scale, or no scale at all, you can use any other way of selecting or quantising pitches. It may be a scale which has been, or still is, used in some part of the world, or it may be your own invention, derived from whatever fantasy or system.
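As a minimal sketch of such a selection, the possible pitches can be written into an array, and the random number then chooses a position in this array rather than a pitch. The pentatonic collection of MIDI note numbers in giMyScale, the fixed durations and the simplified instrument play are assumptions made for this illustration; any other list of pitches could be used in the same way.

```csound
<CsoundSynthesizer>
<CsOptions>
-d -odac -m0
</CsOptions>
<CsInstruments>
sr = 44100
ksmps = 32
nchnls = 2
0dbfs = 1
seed 0

;hypothetical scale: a pentatonic selection of MIDI note numbers
giMyScale[] array 48, 50, 53, 55, 57, 60, 62, 65, 67, 69, 72
giLenMyScale lenarray giMyScale

instr notes
 iHowMany = p4
 iThisNote = 0
 iStart = 0
 until iThisNote == iHowMany do
  ;uniform random position in the array, truncated to an index
  iRnd random 0, giLenMyScale
  iIndx = int(iRnd)
  ;guard against the unlikely case that random returns its maximum
  iIndx = iIndx >= giLenMyScale ? giLenMyScale - 1 : iIndx
  ;read the pitch at this position and play it
  iMidiPch = giMyScale[iIndx]
  event_i "i", "play", iStart, .5, iMidiPch
  iStart += .25
  iThisNote += 1
 enduntil
endin

;simplified version of the instrument play used above
instr play
 iFreq cpsmidinn p4
 aPluck pluck .2, iFreq, iFreq, 0, 1
 aPluck linen aPluck, 0, p3, p3
 outs aPluck, aPluck
endin
</CsInstruments>
<CsScore>
;p3 is long enough for all 16 notes to be performed
i "notes" 0 6 16
</CsScore>
</CsoundSynthesizer>
```

Replacing the uniform random generator by one of the UDOs for linear or triangular distribution would prefer the lower, higher or middle positions of the array.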

As regards the durations, the example above has shown no scaling at all. This was definitely laziness...

The next example is essentially the same as the previous one, but it uses a pitch scale which represents the overtone scale, starting at the second partial and extending upwards to the 32nd partial. This scale is written into an array by a statement in instrument 0. The durations have fixed possible values which are written into an array (from the longest to the shortest) by hand. The values in both arrays are then called according to their position in the array.

EXAMPLE 01D06_scalings.csd

<CsoundSynthesizer>

<CsOptions>

-d -odac -m0

</CsOptions>

<CsInstruments>

sr = 44100

ksmps = 32

nchnls = 2

0dbfs = 1

seed 0

;****POSSIBLE DURATIONS AS ARRAY****

giDurs[] array 3/2, 1, 2/3, 1/2, 1/3, 1/4

giLenDurs lenarray giDurs

;****POSSIBLE PITCHES AS ARRAY****

;initialize array with 31 steps

giScale[] init 31

giLenScale lenarray giScale

;iterate to fill from 65 hz onwards

iStart = 65

iDenom = 3 ;start with 3/2

iCnt = 0

until iCnt == giLenScale do

giScale[iCnt] = iStart

iStart = iStart * iDenom / (iDenom-1)

iDenom += 1 ;next proportion is 4/3 etc

iCnt += 1

enduntil

;****SEQUENCE OF UNITS AS ARRAY****

giSequence[] array 0, 1.2, 1.4, 2.2, 2.4, 3.2, 3.6

giSeqIndx = 0 ;startindex

;****UDO DEFINITIONS****

opcode linrnd_low, i, iii

;linear random with precedence of lower values

iMin, iMax, iMaxCount xin

;set counter and initial (absurd) result

iCount = 0

iRnd = iMax

;loop and reset iRnd

until iCount == iMaxCount do

iUniRnd random iMin, iMax

iRnd = iUniRnd < iRnd ? iUniRnd : iRnd

iCount += 1

enduntil

xout iRnd

endop

opcode linrnd_high, i, iii

;linear random with precedence of higher values

iMin, iMax, iMaxCount xin